From Bulk Export to AI-ready Security Workflows: Introducing Rapid7’s Open-Source MCP Server and Agent Skill

Security teams want more from their data than APIs and one-off reports.

They want to ask better questions, move faster, and bring security context into the workflows they are already building. That’s especially true as more organizations experiment with private AI assistants, internal copilots, and LLM-powered automation. Part of this experimentation is, of course, an attempt to ease the pressure on teams that must prioritize the sheer number of actionable vulnerabilities that efforts like Project Glasswing are quickly becoming highly skilled at spotting.

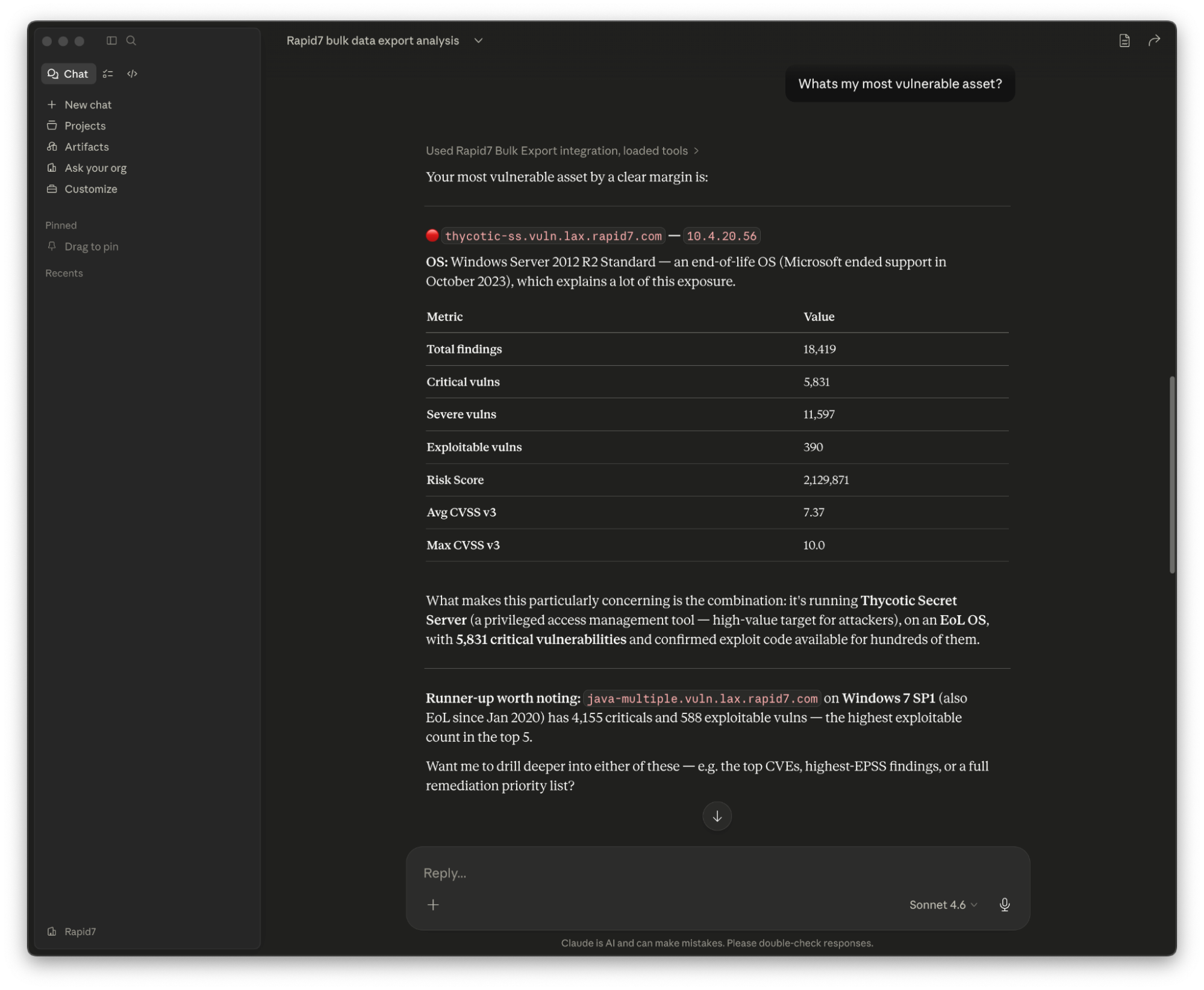

That’s why Rapid7 is introducing a free, open-source MCP Server and Agent Skill for Bulk Export. Bulk Export is a highly efficient way to access all your Rapid7 data: no more paging through APIs, no more verbose output. It creates a local, offline replica of your data that the LLM can interrogate quickly and efficiently, reducing both token cost and the time it takes to answer questions.

Together, the new MCP Server and Agent Skill give customers a standardized way to connect Rapid7 vulnerability and exposure data to AI assistants and custom AI workflows. Built as an open-source bridge, they help customers bring their Rapid7 data into the tools and experiences that work best for their teams.

Why this matters now

Security teams are no longer just buying tools. They’re connecting systems, shaping workflows, and testing how AI can help analysts, IT teams, and leaders get to answers faster. For many teams, the path from raw security data to usable AI context is still manual. It often means exporting data, building wrappers, shaping queries, and managing custom integrations.

Rather than leave every team to solve that challenge from scratch, we wanted to provide a stronger foundation that is flexible, practical, and easy to extend over time. With projects like Metasploit and Velociraptor, Rapid7 is committed to open source, and by sharing this work with the broader community we hope to move faster and incorporate more use cases and fixes. Open-sourcing the project also gives customers full visibility into the code being run and the tools being used, which helps protect data privacy and leaves users free to do what they please with their data.

What MCP does

Model Context Protocol, or MCP, is an emerging standard for helping AI systems interact with external data and tools in a structured way.

In practical terms, it gives AI assistants a cleaner way to ask questions, retrieve data, and work with systems beyond the model itself. For customers, that means less custom glue code and a more consistent way to use security telemetry in AI-driven workflows.
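To make that a little more concrete: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and an arguments object. The sketch below only builds such a request. The tool name `query_vulnerabilities` and its arguments are hypothetical placeholders for illustration, not names taken from the Rapid7 project.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build an MCP tool invocation as a JSON-RPC 2.0 request object."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(
    1, "query_vulnerabilities", {"severity": "critical", "limit": 10}
)
print(json.dumps(request, indent=2))
```

In practice the AI client generates and sends these messages itself; nobody writes them by hand. The point is that every tool exposed through MCP is reached through the same small, predictable envelope.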

That matters because many security reporting and analysis workflows still assume a high technical bar. Answering a simple question can require custom queries, SQL knowledge, or dashboard work. But the people who need those answers aren’t always security specialists. They may be IT partners, compliance stakeholders, or executives who want clarity but might not need to understand the underlying query logic.

The MCP server helps lower that barrier: Instead of starting with raw exports and working backward, teams can start with the question they need answered.

The bigger picture: MCP and CTEM

This approach also aligns with the broader shift toward continuous threat exposure management, or CTEM.

CTEM is about helping teams move beyond point-in-time findings toward a more continuous, contextual understanding of risk. That requires security data that can be accessed, connected, and used across the workflows teams rely on.

Bulk Export helps make that possible by giving customers more flexibility in how they use Rapid7 data. The open-source MCP server makes it easier to bring that data into AI-assisted and custom workflows.


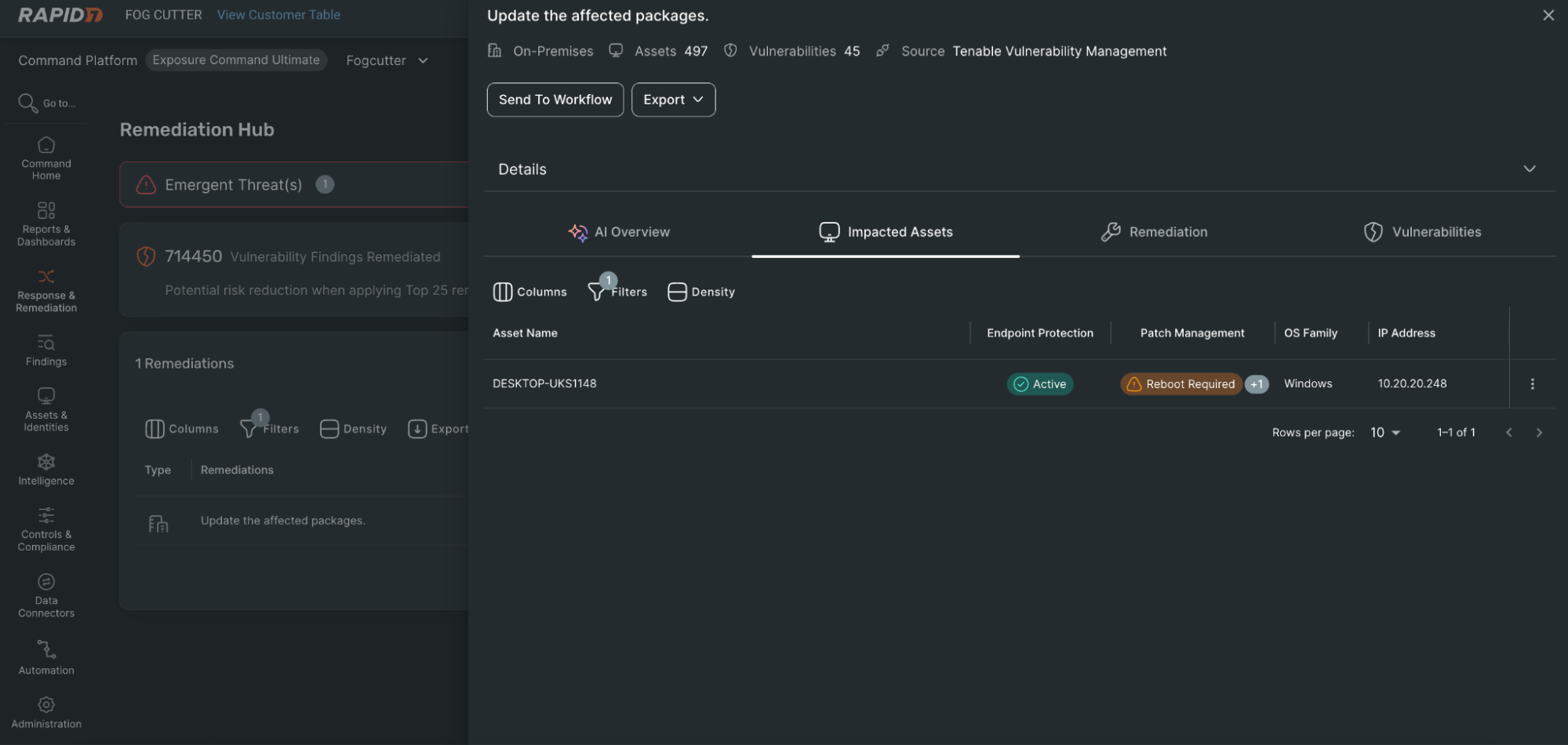

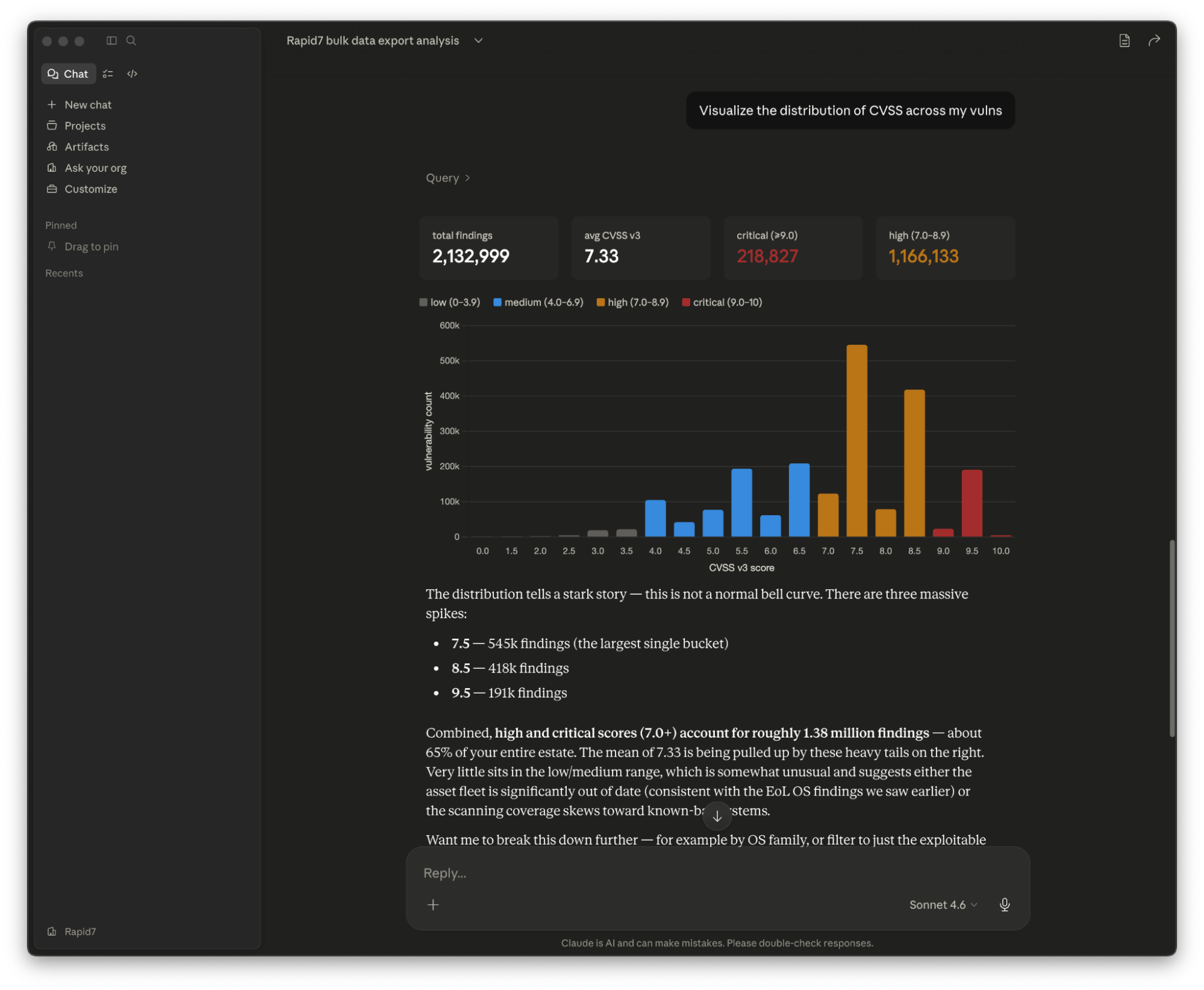

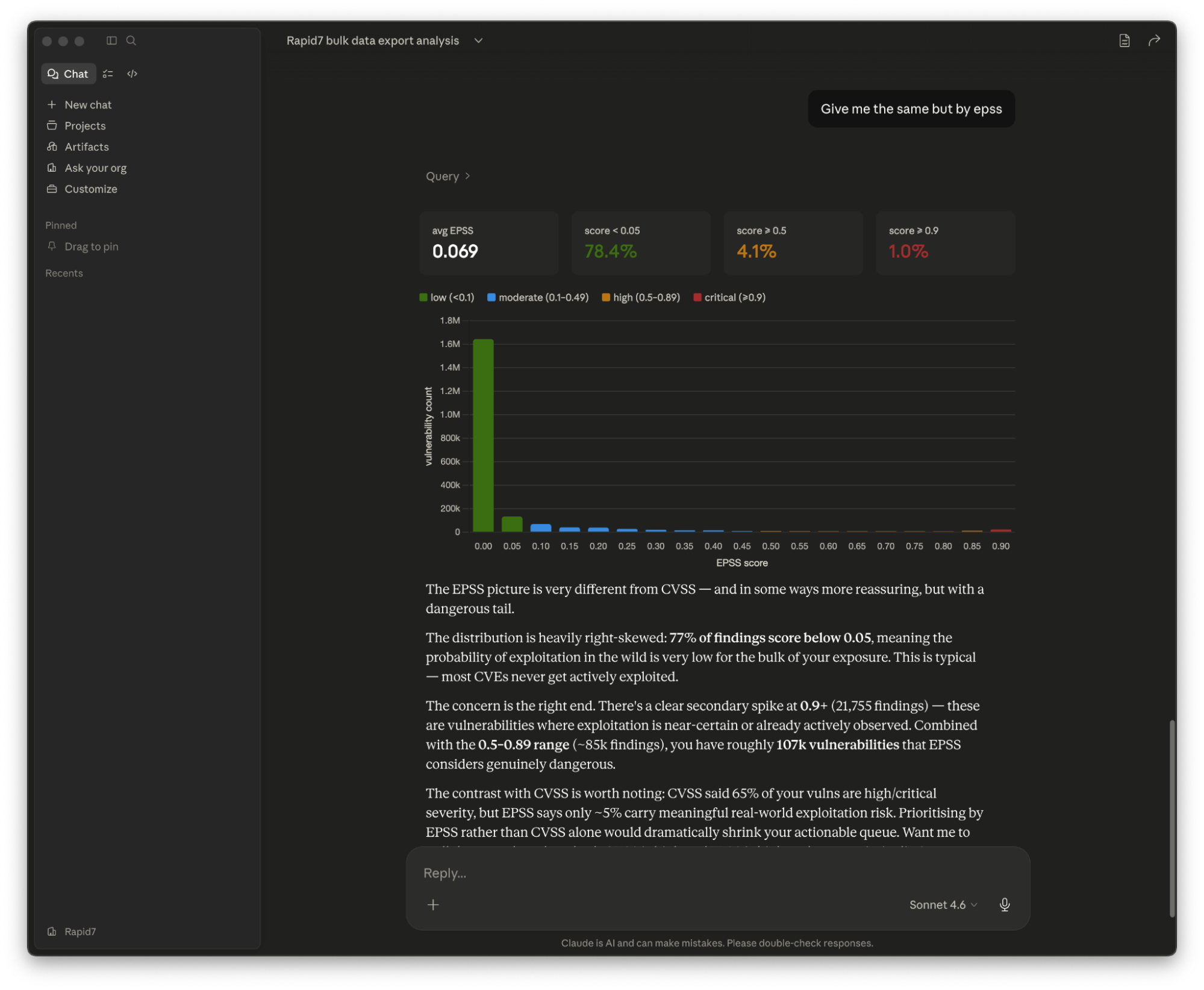

That can support more continuous exposure management workflows by making it easier for teams to triage vulnerability and exposure data. For example, an analyst facing a large queue of new vulnerabilities could use LLM assistance to quickly narrow in on the findings most likely to need attention first. Instead of manually working through exports and queries, they could ask natural-language questions to surface the exposures tied to critical assets, unresolved remediation work, or other signals available in the data.
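As a rough sketch of the kind of prioritization an assistant might perform behind such a question, the snippet below drops remediated findings, puts findings on critical assets first, and then orders by score. The `Finding` fields and the sample records are invented for illustration and do not reflect the actual Bulk Export schema.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    # Hypothetical fields; a real export will have its own schema.
    vuln_id: str
    asset: str
    cvss: float
    asset_critical: bool
    remediated: bool


def triage(findings, limit=3):
    """Return open findings, critical assets first, then by descending CVSS."""
    open_findings = [f for f in findings if not f.remediated]
    ranked = sorted(open_findings, key=lambda f: (not f.asset_critical, -f.cvss))
    return ranked[:limit]


# Made-up sample data.
findings = [
    Finding("VULN-A", "web-01", 9.8, True, False),
    Finding("VULN-B", "dev-07", 9.9, False, False),
    Finding("VULN-C", "db-02", 7.5, True, False),
    Finding("VULN-D", "web-01", 10.0, True, True),
]

for f in triage(findings):
    print(f.vuln_id, f.asset, f.cvss)
```

Here the already-remediated finding is excluded, and the two open findings on critical assets rank ahead of the higher-scoring finding on a non-critical asset. A real workflow would choose its own signals and weighting.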

From data portability to AI-ready interoperability

Bulk Export was already an important step toward giving customers more control over their data. It made it easier to extract and use security telemetry in external tools and analytics environments.

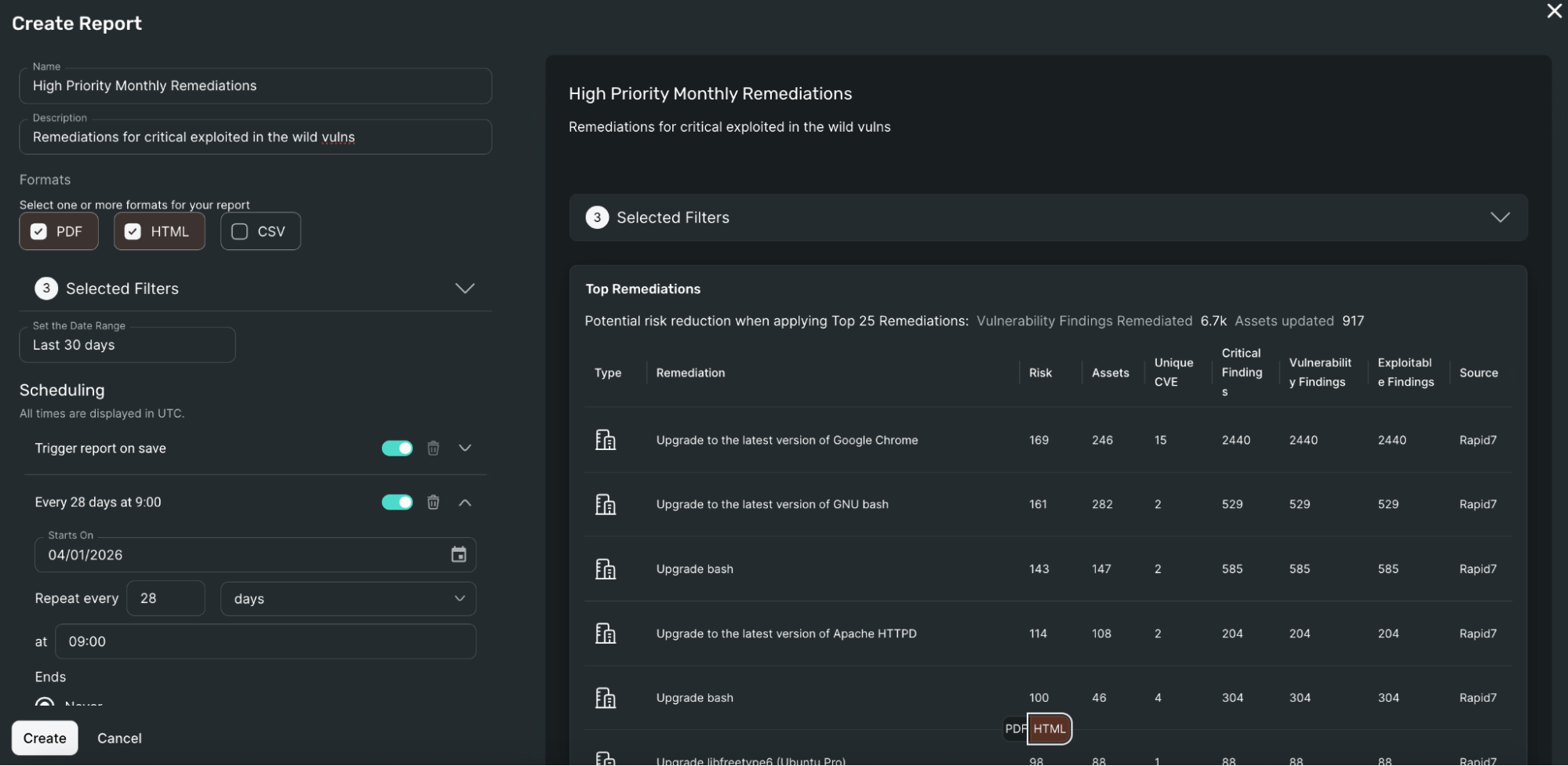

The open-source MCP server builds on that foundation: Instead of using exported data only for dashboards or custom reporting, customers can now use that same data in AI-native experiences. That includes internal assistants, private copilots, workflow automation, and natural-language exploration of vulnerability and exposure data. This makes existing security data easier to use in the environments customers are already investing in.

How it works

At a high level, the architecture is straightforward. Using the Agent Skill, your LLM runs the MCP server locally and automatically prepares the environment by performing the bulk export and loading the data into a local file store. The Agent Skill provides the schemas and knowledge, while the MCP server provides the tools to access this data. The LLM can then answer questions by querying, summarizing, and synthesizing data locally, a process that is fast and simple for the LLM.

Depending on the data a customer exports, answers can include vulnerability records, asset data, remediated vulnerabilities, and policy-related results.
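The pattern described above (load exported data into a local store, then let the model query it through a tool) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the project's implementation: SQLite stands in for whatever local store the real server uses, and the `asset_vulnerability` table, its columns, and the sample rows are all hypothetical.

```python
import sqlite3

# Stand-in for exported rows; a real export will have a different schema.
EXPORTED_ROWS = [
    ("asset-1", "VULN-A", "critical", 0),
    ("asset-1", "VULN-B", "moderate", 1),
    ("asset-2", "VULN-A", "critical", 0),
    ("asset-3", "VULN-C", "severe", 0),
]


def load_local_store(rows):
    """Load exported rows into an in-memory SQLite database."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE asset_vulnerability ("
        "asset_id TEXT, vuln_id TEXT, severity TEXT, remediated INTEGER)"
    )
    conn.executemany("INSERT INTO asset_vulnerability VALUES (?, ?, ?, ?)", rows)
    conn.commit()
    return conn


def run_query(conn, sql, params=()):
    """A read-only query helper of the kind a local tool might expose."""
    if not sql.lstrip().lower().startswith("select"):
        raise ValueError("only SELECT statements are allowed")
    return conn.execute(sql, params).fetchall()


conn = load_local_store(EXPORTED_ROWS)
open_by_severity = run_query(
    conn,
    "SELECT severity, COUNT(*) FROM asset_vulnerability "
    "WHERE remediated = 0 GROUP BY severity ORDER BY COUNT(*) DESC, severity",
)
print(open_by_severity)
```

Because the data sits locally, the model can answer with one compact aggregate query rather than paging through verbose API responses, which is where the savings in tokens and time come from.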

The point here isn't just that a model can access the data. It’s that an open-source layer is something customers can inspect, adapt, and extend over time, giving teams control over how that connection works in their own environment.

What customers can do with it

This opens the door to practical use cases, including:

- Using LLM assistance to triage vulnerability data faster
- Asking natural-language questions to spot exposure and remediation trends
- Investigating which assets are tied to the most urgent vulnerabilities
- Understanding what changed over time without manual analysis
- Exploring policy failures without building manual queries
- Feeding Rapid7 telemetry into private AI assistants and internal workflows
- Making reporting more accessible for non-technical stakeholders


For teams already trying to operationalize AI, this creates a lower-friction path. Instead of building every integration from the ground up, they can start with a reusable bridge and focus on the workflows they want to enable.

A better path from data to action

Security data only creates value when teams can use it. For many organizations, turning raw telemetry into timely answers is still harder than it should be. Analysts need speed. Leaders need clarity. Builders need flexibility. And more customers want security data that works inside the tools and workflows they already rely on.

The open-source MCP server for Bulk Export is designed to help make that possible.

Bulk Export helps customers take control of their data. This is the next step: helping them put that data to work in AI-ready security workflows.

Ready to explore it for yourself? Visit the Rapid7 Bulk Export MCP Server project on GitHub to learn more and get started.