16% of Parents Help Their Children Bypass Online Age Checks, Study Finds. One 15-Year-Old Just Uses a Fake Moustache

Read more of this story at Slashdot.

Read more of this story at Slashdot.

U.S. and international government agencies warned Thursday about a “widespread shift” in Chinese hacker methods toward the use of large-scale covert networks that compromise common devices to carry out a variety of attacks.

The advisory details how those networks work, and defensive steps organizations should take.

“Over the past few years there has been a major shift in the tactics, techniques and procedures (TTPs) used by China-nexus cyber actors, moving away from the use of individually procured infrastructure, and towards the use of externally provisioned, large-scale networks of compromised devices,” the warning reads.

The U.K. National Cyber Security Centre, Cybersecurity and Infrastructure Security Agency, National Security Agency, FBI and agencies from Australia, Canada, Germany, Netherlands, New Zealand, Japan, Spain and Sweden joined forces on the advisory.

It says that “multiple covert networks have been created and are being constantly updated, and that a single covert network could be being used by multiple actors. These networks are mainly made up of compromised Small Office Home Office (SOHO) routers, as well as Internet of Things (IoT) and smart devices.”

It continues: “Covert networks are used to connect across the internet in a low-cost, low-risk, deniable way, disguising the origin and attribution of malicious activity.”

Chinese information security companies create and support the networks, evidence suggests, according to the agencies. Hackers use the networks for reconnaissance, malware delivery and stealing information, they said.

Examples of the use of covert networks include activities from groups known as Volt Typhoon to pre-position on U.S. critical infrastructure, and Flax Typhoon to conduct cyber espionage.

An example of a covert network is the botnet Raptor Train, which infected 200,000 devices worldwide. The networks are large, constantly evolving and with new ones being developed constantly.

At a speech this week, NCSC CEO Richard Horne said “we know that China’s intelligence and military agencies now display an eye-watering level of sophistication in their cyber operations.”

Defenses against covert networks aren’t “straightforward,” according to the advisory, but include an assortment of common good cybersecurity practices. The largest and most at-risk organizations should engage in active hunting, tracking and mapping covert networks, using threat reporting to create blocklists and more.

“Working closely with U.S. and international partners, CISA continues to identify and warn organizations of Chinese state-sponsored cyber actors threatening critical infrastructure,” CISA Acting Director Nick Andersen said Thursday. “This advisory informs organizations of how these actors are strategically using numerous, evolving covert networks at scale for malicious cyber activity.”

The post A dozen allied agencies say China is building covert hacker networks out of everyday routers appeared first on CyberScoop.

British businesses need to prepare themselves to defend against cyberattacks because the U.K. could be targeted “at scale,” if it became involved in an international conflict.

The post Most Serious Cyberattacks Against the UK Now From Russia, Iran and China, Cyber Chief Says appeared first on SecurityWeek.

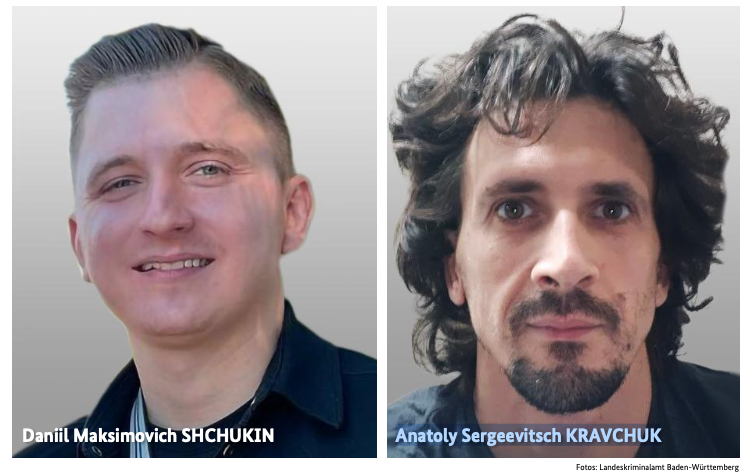

An elusive hacker who went by the handle “UNKN” and ran the early Russian ransomware groups GandCrab and REvil now has a name and a face. Authorities in Germany say 31-year-old Russian Daniil Maksimovich Shchukin headed both cybercrime gangs and helped carry out at least 130 acts of computer sabotage and extortion against victims across the country between 2019 and 2021.

Shchukin was named as UNKN (a.k.a. UNKNOWN) in an advisory published by the German Federal Criminal Police (the “Bundeskriminalamt” or BKA for short). The BKA said Shchukin and another Russian — 43-year-old Anatoly Sergeevitsch Kravchuk — extorted nearly $2 million euros across two dozen cyberattacks that caused more than 35 million euros in total economic damage.

Daniil Maksimovich SHCHUKIN, a.k.a. UNKN, and Anatoly Sergeevitsch Karvchuk, alleged leaders of the GandCrab and REvil ransomware groups.

Germany’s BKA said Shchukin acted as the head of one of the largest worldwide operating ransomware groups GandCrab and REvil, which pioneered the practice of double extortion — charging victims once for a key needed to unlock hacked systems, and a separate payment in exchange for a promise not to publish stolen data.

Shchukin’s name appeared in a Feb. 2023 filing (PDF) from the U.S. Justice Department seeking the seizure of various cryptocurrency accounts associated with proceeds from the REvil ransomware gang’s activities. The government said the digital wallet tied to Shchukin contained more than $317,000 in ill-gotten cryptocurrency.

The GandCrab ransomware affiliate program first surfaced in January 2018, and paid enterprising hackers huge shares of the profits just for hacking into user accounts at major corporations. The GandCrab team would then try to expand that access, often siphoning vast amounts of sensitive and internal documents in the process. The malware’s curators shipped five major revisions to the GandCrab code, each corresponding with sneaky new features and bug fixes aimed at thwarting the efforts of computer security firms to stymie the spread of the malware.

On May 31, 2019, the GandCrab team announced the group was shutting down after extorting more than $2 billion from victims. “We are a living proof that you can do evil and get off scot-free,” GandCrab’s farewell address famously quipped. “We have proved that one can make a lifetime of money in one year. We have proved that you can become number one by general admission, not in your own conceit.”

The REvil ransomware affiliate program materialized around the same as GandCrab’s demise, fronted by a user named UNKNOWN who announced on a Russian cybercrime forum that he’d deposited $1 million in the forum’s escrow to show he meant business. By this time, many cybersecurity experts had concluded REvil was little more than a reorganization of GandCrab.

UNKNOWN also gave an interview to Dmitry Smilyanets, a former malicious hacker hired by Recorded Future, wherein UNKNOWN described a rags-to-riches tale unencumbered by ethics and morals.

“As a child, I scrounged through the trash heaps and smoked cigarette butts,” UNKNOWN told Recorded Future. “I walked 10 km one way to the school. I wore the same clothes for six months. In my youth, in a communal apartment, I didn’t eat for two or even three days. Now I am a millionaire.”

As described in The Ransomware Hunting Team by Renee Dudley and Daniel Golden, UNKNOWN and REvil reinvested significant earnings into improving their success and mirroring practices of legitimate businesses. The authors wrote:

“Just as a real-world manufacturer might hire other companies to handle logistics or web design, ransomware developers increasingly outsourced tasks beyond their purview, focusing instead on improving the quality of their ransomware. The higher quality ransomware—which, in many cases, the Hunting Team could not break—resulted in more and higher pay-outs from victims. The monumental payments enabled gangs to reinvest in their enterprises. They hired more specialists, and their success accelerated.”

“Criminals raced to join the booming ransomware economy. Underworld ancillary service providers sprouted or pivoted from other criminal work to meet developers’ demand for customized support. Partnering with gangs like GandCrab, ‘cryptor’ providers ensured ransomware could not be detected by standard anti-malware scanners. ‘Initial access brokerages’ specialized in stealing credentials and finding vulnerabilities in target networks, selling that access to ransomware operators and affiliates. Bitcoin “tumblers” offered discounts to gangs that used them as a preferred vendor for laundering ransom payments. Some contractors were open to working with any gang, while others entered exclusive partnerships.”

REvil would evolve into a feared “big-game-hunting” machine capable of extracting hefty extortion payments from victims, largely going after organizations with more than $100 million in annual revenues and fat new cyber insurance policies that were known to pay out.

Over the July 4, 2021 weekend in the United States, REvil hacked into and extorted Kaseya, a company that handled IT operations for more than 1,500 businesses, nonprofits and government agencies. The FBI would later announce they’d infiltrated the ransomware group’s servers prior to the Kaseya hack but couldn’t tip their hand at the time. REvil never recovered from that core compromise, or from the FBI’s release of a free decryption key for REvil victims who couldn’t or didn’t pay.

Shchukin is from Krasnodar, Russia and is thought to reside there, the BKA said.

“Based on the investigations so far, it is assumed that the wanted person is abroad, presumably in Russia,” the BKA advised. “Travel behaviour cannot be ruled out.”

There is little that connects Shchukin to UNKNOWN’s various accounts on the Russian crime forums. But a review of the Russian crime forums indexed by the cyber intelligence firm Intel 471 shows there is plenty connecting Shchukin to a hacker identity called “Ger0in” who operated large botnets and sold “installs” — allowing other cybercriminals to rapidly deploy malware of their choice to thousands of PCs in one go. However, Ger0in was only active between 2010 and 2011, well before UNKNOWN’s appearance as the REvil front man.

A review of the mugshots released by the BKA at the image comparison site Pimeyes found a match on this birthday celebration from 2023, which features a young man named Daniel wearing the same fancy watch as in the BKA photos.

Update, April 6, 12:06 p.m. ET: A reader forwarded this English-dubbed audio recording from a ccc.de (37C3) conference talk in Germany from 2023 that previously outed Shchukin as the REvil leader (Shchuckin is mentioned at around 24:25).

Read more of this story at Slashdot.

Read more of this story at Slashdot.

The UK’s top internet regulator opened a formal investigation into social media network X after users, with the help of its AI chatbot Grok, flooded the site with nonconsensual, AI-manipulated nude and undressed photos of real people.

On Monday, the Office of Communications (Ofcom), which regulates internet and telecommunications companies, said the investigation will determine whether the content violates The UK Online Safety Act.

Ofcom said the investigation will focus on whether X has complied with portions of the law requiring them to assess the risk of whether such content will reach UK audiences online, take steps prevent the distribution of nonconsensual images and child sexual abuse material (CSAM), take down illegal content and protect user privacy.

It will also focus on whether X took steps to evaluate the risk that Grok’s deepfake capabilities would pose to UK children or use age verification features to block children from access or seeing the content. The regulator said it continues to engage with officials at X, who will have “an opportunity to respond to our findings in full, as required by the Act, before we make our final decision.”

“Reports of Grok being used to create and share illegal non-consensual intimate images and child sexual abuse material on X have been deeply concerning,” an Ofcom spokesperson said in a statement. “Platforms must protect people in the UK from content that’s illegal in the UK, and we won’t hesitate to investigate where we suspect companies are failing in their duties, especially where there’s a risk of harm to children.”

Ofcom also specified that it is a regulatory body, not a government censor, and the purpose of the inquiry is to determine whether X is breaking the law by facilitating the spread of nonconsensual deepfake pornography, including that of children.

Last week, Prime Minister Keir Starmer of the ruling Labour Party called the deepfake scandal “disgusting” and said all options, including banning X from Britain, were on the table.

Following the investigation, the regulator will determine if X has failed to comply with the Online Safety Act and issue a provisional sanction. Beyond possible legal orders compelling X to change Grok and its business practices, the sanctions could include fines of up to £18 million or 10% of the company’s worldwide revenue.Ofcom said it has used its newfound powers under the Online Safety Act – first implemented last year – to launch investigations into more than 90 platforms, issue fines to six companies for failure to have “robust” age verification technology, and issued its first £1 million fine.

But the investigation and potential sanctions of X, based in the U.S. and owned by the richest person in the world, will mark the most significant test yet of the regulatory agency’s authority under the new law. Thus far the U.S. The Department of Justice and the Federal Trade Commission have been silent as outrage from users and international governments continues to grow.

The post British regulator Ofcom opens investigation into X appeared first on CyberScoop.

A trio of Senate Democrats are calling on Apple and Google to drop Elon Musk’s X from app stores as international regulators in Europe and Britain took steps towards investigations of the site’s mass undressing of users using Grok’s AI tool.

On Friday, Senators Ron Wyden, D-Ore., Ben Ray Luján, D-N.M., and Ed Markey, D-Mass., wrote to Apple’s and Google’s chief executives, asking them to “enforce your apps stores’ terms of service against X.”

“X’s generation of these harmful and likely illegal depictions of women and children has shown complete disregard for your stores’ distribution terms,” they wrote.

The Senators quote from Google Play Store’s terms of service stating that apps must “prohibit users from creating, uploading, or distributing content that facilitates the exploitation or abuse of children” and subject them to immediate removal for violations. Apple’s terms allow wide flexibility to take action on apps or content that are “offensive” or “just plain creepy,” something they argued should clearly cover what is happening on X.

“There can be no mistake about X’s knowledge, and, at best, negligent response to these trends,” the lawmakers wrote.

The lawmakers explicitly compared the lack of action or comments from both companies thus far to the way the stores treated apps meant to track Immigrations and Customs Enforcement operations around the country, such as ICEBlock and Red Dot.

“Unlike Grok’s sickening content generation, these apps were not creating or hosting harmful or illegal content, and yet, based entirely on the Administration’s claims that they posed a risk to immigration enforcers, you removed them from your stores,” the Senators noted.

The call comes as international regulators have turned up the heat on X over the scandal, while conflicting reports swirl about the extent to which X has limited Grok’s deepfake functionality after weeks of criticism.

The UK’s Office of Communications, the nation’s top communications regulatory agency, said it had made “urgent” contact with X over the images being generated by users through Grok, and that based on their response, “we will undertake a swift assessment to determine whether there are potential compliance issues” under the UK Online Safety Act. Friday, Prime Minister Keir Starmer called the images “unlawful” and “disgusting” and promised that all options, including a potential ban of X, were being considered.

Meanwhile, the European Union has ordered X to preserve all documents related to Grok through 2026, an indication that it could be subject to regulatory or law enforcement investigations, according to Reuters.

As CyberScoop and others have reported, legal experts have said that Musk may be exposing X to broad legal and regulatory risks from states, federal regulators and law enforcement.

There have been conflicting reports that X, which has not responded to inquiries from journalists under Musk’s ownership, may be taking steps to limit Grok’s deepfake functionality for some of its users.

On Friday, Musk posted on X that he was limiting the feature to paid users, which has resulted in a fresh round of outrage from observers who pointed out that monetizing illegal sexual deepfakes was not a solution to the problem. Prior to that statement, the only public response from Musk addressing the scandal was a post he made with “cry-laughing” emojis in response to a Grok-generated deepfake of himself wearing a bikini.

Musk doesn’t release numbers around paid subscribers, but a TechCrunch analysis indicates that it could be as high as 1.3-3.7 million users based on revenues reported from in-app purchases.

But even the claim that non-paying users are shut out from making further sexualized deepfakes through Grok may be inaccurate, as users on social media reported that even after the change, they were able to access Grok’s deepfake feature as a free user through X or Grok’s website.

The post Dems pressure Google, Apple to drop X app as international regulators turn up heat appeared first on CyberScoop.

Microsoft today pushed updates to fix at least 56 security flaws in its Windows operating systems and supported software. This final Patch Tuesday of 2025 tackles one zero-day bug that is already being exploited, as well as two publicly disclosed vulnerabilities.

Despite releasing a lower-than-normal number of security updates these past few months, Microsoft patched a whopping 1,129 vulnerabilities in 2025, an 11.9% increase from 2024. According to Satnam Narang at Tenable, this year marks the second consecutive year that Microsoft patched over one thousand vulnerabilities, and the third time it has done so since its inception.

The zero-day flaw patched today is CVE-2025-62221, a privilege escalation vulnerability affecting Windows 10 and later editions. The weakness resides in a component called the “Windows Cloud Files Mini Filter Driver” — a system driver that enables cloud applications to access file system functionalities.

“This is particularly concerning, as the mini filter is integral to services like OneDrive, Google Drive, and iCloud, and remains a core Windows component, even if none of those apps were installed,” said Adam Barnett, lead software engineer at Rapid7.

Only three of the flaws patched today earned Microsoft’s most-dire “critical” rating: Both CVE-2025-62554 and CVE-2025-62557 involve Microsoft Office, and both can exploited merely by viewing a booby-trapped email message in the Preview Pane. Another critical bug — CVE-2025-62562 — involves Microsoft Outlook, although Redmond says the Preview Pane is not an attack vector with this one.

But according to Microsoft, the vulnerabilities most likely to be exploited from this month’s patch batch are other (non-critical) privilege escalation bugs, including:

–CVE-2025-62458 — Win32k

–CVE-2025-62470 — Windows Common Log File System Driver

–CVE-2025-62472 — Windows Remote Access Connection Manager

–CVE-2025-59516 — Windows Storage VSP Driver

–CVE-2025-59517 — Windows Storage VSP Driver

Kev Breen, senior director of threat research at Immersive, said privilege escalation flaws are observed in almost every incident involving host compromises.

“We don’t know why Microsoft has marked these specifically as more likely, but the majority of these components have historically been exploited in the wild or have enough technical detail on previous CVEs that it would be easier for threat actors to weaponize these,” Breen said. “Either way, while not actively being exploited, these should be patched sooner rather than later.”

One of the more interesting vulnerabilities patched this month is CVE-2025-64671, a remote code execution flaw in the Github Copilot Plugin for Jetbrains AI-based coding assistant that is used by Microsoft and GitHub. Breen said this flaw would allow attackers to execute arbitrary code by tricking the large language model (LLM) into running commands that bypass the user’s “auto-approve” settings.

CVE-2025-64671 is part of a broader, more systemic security crisis that security researcher Ari Marzuk has branded IDEsaster (IDE stands for “integrated development environment”), which encompasses more than 30 separate vulnerabilities reported in nearly a dozen market-leading AI coding platforms, including Cursor, Windsurf, Gemini CLI, and Claude Code.

The other publicly-disclosed vulnerability patched today is CVE-2025-54100, a remote code execution bug in Windows Powershell on Windows Server 2008 and later that allows an unauthenticated attacker to run code in the security context of the user.

For anyone seeking a more granular breakdown of the security updates Microsoft pushed today, check out the roundup at the SANS Internet Storm Center. As always, please leave a note in the comments if you experience problems applying any of this month’s Windows patches.

The UK’s top cyber agency issued a warning to the public Monday: large language model AI tools may always contain a persistent flaw that allows malicious actors to hijack models and potentially weaponize them against users.

When ChatGPT launched in 2022, security researchers began testing the tool and other LLMs for functionality, security and privacy. They very quickly identified a fundamental deficiency: because these models treat all prompts as instructions, they can be easily manipulated through simple techniques that would typically only succeed against young children.

Known as prompt injection, this technique works by sending malicious requests to the AI in the form of instructions, allowing bad actors to blow past any internal guardrails that developers had put in place to prevent models from taking harmful or dangerous actions.

In a blog post Monday—three years after ChatGPT’s debut—the UK’s top cybersecurity agency warned that prompt injection is inextricably intertwined in LLMs’ architecture, making the problem impossible to eliminate entirely.

The National Cyber Security Centre’s technical director for platforms research said this is because, at their core, these large language models do not make any distinction between trusted and untrusted content they encounter.

“Current large language models (LLMs) simply do not enforce a security boundary between instructions and data inside a prompt,” wrote David C (the NCSC does not publish its director’s full name in public releases).

Instead these models “concatenate their own instructions with untrusted content in a single prompt, and then treat the model’s response as if there were a robust boundary between ‘what the app asked for’ and anything in the untrusted content,” he wrote.

While there may be a temptation to compare prompt injection to other kinds of manageable attacks, like SQL injection, which also deal with web pages incorrectly handling data and instructions, the English expert said he believes prompt injections are substantively worse in important ways.

Because these algorithms operate solely through pattern matching and prediction, they cannot distinguish between different inputs. The models lack the ability to assess whether the information is trustworthy, or if the input is merely something the program should process and store or treat as active instructions for its next task.

“Under the hood of an LLM, there’s no distinction made between ‘data’ or ‘instructions’; there is only ever ‘next token,’” the author wrote. “When you provide an LLM prompt, it doesn’t understand the text in the way a person does. It is simply predicting the most likely next token from the text so far.

Because of this, “it’s very possible that prompt injection attacks may never be totally mitigated in the way that SQL injection attacks can be,” he wrote.

The NCSC’s findings align with what some independent researchers and even AI companies have been saying: that problems like prompt injections, jailbreaking and hallucinations may never fully be solved. And when these models pull content from the internet, or from external parties to complete tasks, there will always be a danger that such content will be treated as a direct instruction from its owners or administrators.

On software repositories like GitHub, major AI coding tools from Open AI and Anthropic have been integrated into automated software development workflows. These integrations created a vulnerability: maintainers—and in some cases, external contributors—could embed malicious prompts within standard development elements like commit messages and pull requests. The LLM would then treat these prompts as legitimate instructions.

While some of the models could only execute major tasks with human approval, the researchers said this too could be circumvented with a one-line prompt.

Meanwhile, AI browser agents that are meant to help users and businesses shop, communicate and do research online have been found to be similarly vulnerable to many of the same problems.

Researchers found they could sometimes piggyback off ChatGPT’s browser authentication protocols to inject hidden instructions into the LLM’s memory and achieve remote code execution privileges.

Other researchers have created web pages that served different content to AI crawlers visiting their website, influencing the model’s internal evaluations with untrusted content.

AI companies have increasingly acknowledged the enduring nature of these weaknesses in LLM technology, though they claim to be working on solutions.

In September, OpenAI published a paper claiming that hallucinations are a solvable problem. According to the research, hallucinations occur because of how developers train and evaluate these models: large language models are penalized when they express uncertainty over giving confident answers, even if the confident answers are wrong. For example, if you ask an LLM what your birthday is, an LLM that responds “I don’t know” gets a lower evaluation score than one that guesses any of the possible 365 answers, despite having no way to know the correct answer.

The paper claims that OpenAI’s evaluation for newer models rebalances those incentives, leading to fewer (but nonzero) hallucinations.Companies like Anthropic have said they rely on monitoring of user accounts and other outside detection tools, as opposed to internal guardrails within the models themselves, to identify and combat jailbreaking, which affect nearly all commercial and open source models.

The post UK cyber agency warns LLMs will always be vulnerable to prompt injection appeared first on CyberScoop.

On Monday, more than 60 digital commerce and trade groups called on governments around the globe to reject efforts or requests to weaken or bypass encryption, saying strong encrypted communications provides critical protections for user privacy, secure data protection and trust that underpin some of society’s most important interactions.

“Encryption is a vital tool for ensuring that consumers, businesses and governments can confidentially engage online, fostering a secure environment that supports economic growth and cross-border collaboration,” the groups wrote.

The letter, signed by The App Association, the Business Software Alliance, the Information Technology Industry Council, the Surveillance Technology Oversight Project and others, argues that the tradeoffs in privacy and security to all users would outweigh the benefits to law enforcement, stating “any effort to undermine encryption, whether through backdoors, key escrow systems, or technical mandates, undermines that trust.”

While policymakers in the U.S. and other democracies have been debating the question of “lawful access” to encrypted data for decades, the letter comes as countries in Europe and other parts of the world have made moves over the past year to regulate or mandate some form of legalized access for criminal and national security investigations.

This year, Apple removed its end-to-end encrypted Advanced Data Protection plans from the UK, part of a running dispute with British officials over access to encrypted iCloud data for national security investigations. Over the past three decades, the U.S. and governments around the world have come up with a range of technological proposals for gaining access to encrypted communications for law enforcement and national security investigations: from Clipper Chips to key escrow systems.

In August, Director of National Intelligence Tulsi Gabbard claimed to have persuaded British officials to reverse their position, but the next month Apple reiterated its plans to remove the advanced encryption plan from UK devices, saying it “remains committed to offering our users the highest level of security for their personal data and we are hopeful that we will be able to do so in the future in the United Kingdom.”

“As we have said many times before, we have never built a backdoor or master key to any of our products or services and we never will,” Apple’s statement reads.

Across St. George’s Channel, Ireland’s Minister of Justice Jim O’Callaghan is reportedly working on a proposal that would grant access to encrypted data to the An Garda Síochána, the country’s national police and security service.

Details of that proposal have not been publicized, but in a speech in July, O’Callaghan outlined his views on encryption, saying that the right to privacy cannot be allowed to become “sacrosanct” when it comes to law enforcement investigations and that there is “a need to grapple with the question of what data we will permit [police] to access, and what systems, protections and oversights should be in place.”

“None of us would like to imagine living in a surveillance State, with all of our private life – our thoughts, our communications, our interests – being observed and recorded,” O’Callaghan said. “But neither, I think, would we like to imagine people who have taken or plan to take the lives of others continuing to walk free with impunity, as a result of an inability on the part of Gardaí to effectively investigate their crimes.”Last month, the European Union came close to passing a new regulation, called Chat Control, that would have given governments broad authority to mass scan user devices for Child Sexual Abuse Material (CSAM).

Digital groups said the regulation would mark “the end” of privacy in Europe and threaten journalists, human rights activists, political dissidents, domestic abuse survivors and other victims who rely on the technology for legitimate means. Germany, a critical swing vote, later came out against the proposal, and EU proponents canceled the vote.

The post Dozens of groups call for governments to protect encryption appeared first on CyberScoop.