Flaw in Claude’s Chrome extension allowed ‘any’ other plugin to hijack victims’ AI

As businesses and governments turn to AI agents to access the internet and perform higher-level tasks, researchers continue to find serious flaws in large language models that can be exploited by bad actors.

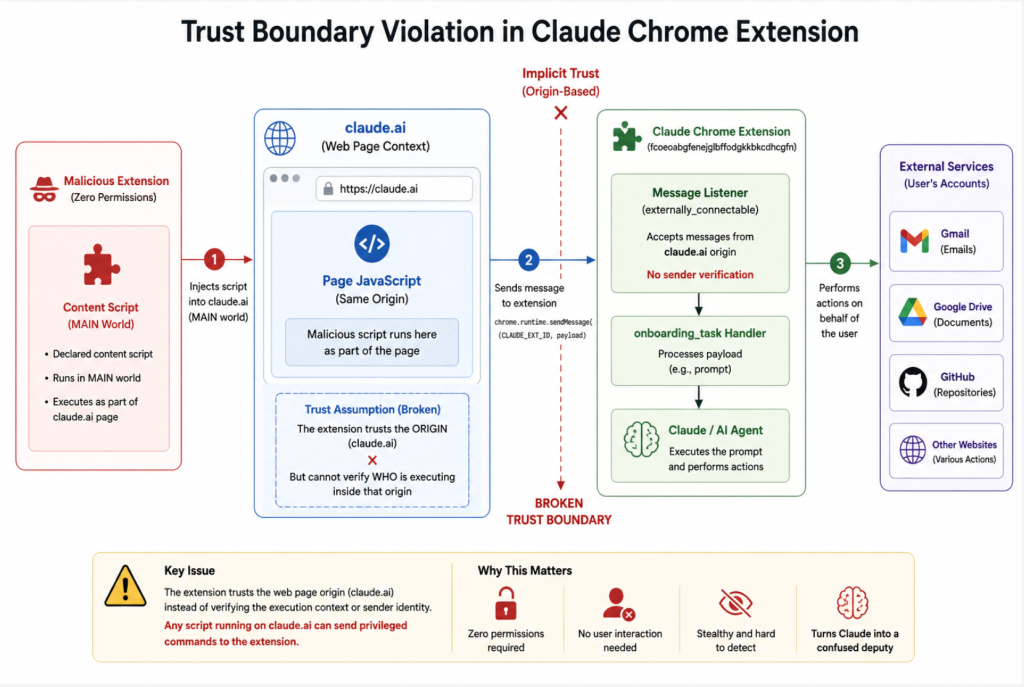

The latest discovery, from browser security firm LayerX, involves a bug in the Chrome extension for Anthropic’s Claude AI model that allows any other plugin, even one without special permissions, to embed hidden instructions that can take over the agent.

“The flaw stems from an instruction in the extension’s code that allows any script running in the origin browser to communicate with Claude’s LLM, but does not verify who is running the script,” wrote LayerX senior researcher Aviad Gispan. “As a result, any extension can invoke a content script (which does not require any special permissions) and issue commands to the Claude extension.”
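To illustrate the kind of check Gispan describes as missing, here is a minimal sketch of sender verification in a message handler. It is not Anthropic’s code: the `Sender` and `AgentMessage` shapes, the `handleMessage` function and the placeholder ID are all hypothetical, and the sketch assumes the handler can learn which extension sent a message (Chrome’s extension messaging API exposes a sender object with an `id` field for this purpose).

```typescript
// Hypothetical shapes for illustration only; not taken from the Claude extension.
interface Sender {
  extensionId?: string; // ID of the extension that sent the message, if known
  origin?: string;      // origin of the page the sending script ran in
}

interface AgentMessage {
  type: string;
  prompt: string;
}

// Assumption: the agent only accepts commands from its own extension ID.
const TRUSTED_EXTENSION_ID = "trusted-extension-id-placeholder";

// Returns true only when the sender identifies itself with the trusted ID.
function isTrustedSender(sender: Sender, trustedId: string): boolean {
  return sender.extensionId !== undefined && sender.extensionId === trustedId;
}

// Rejects any message whose sender cannot be verified, before the prompt
// is ever passed along to the agent.
function handleMessage(msg: AgentMessage, sender: Sender): "accepted" | "rejected" {
  if (!isTrustedSender(sender, TRUSTED_EXTENSION_ID)) {
    return "rejected";
  }
  return "accepted";
}
```

In this sketch a message from an unknown extension, or one with no sender identity at all, is dropped regardless of what the prompt says. The flaw LayerX describes corresponds to skipping that check and acting on the message contents alone.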

Gispan said he was able to execute any prompt he wanted, blow through Claude’s safety guardrails, evade user confirmation and perform cross-site actions across multiple Google tools. As a proof of concept, LayerX was able to exploit the flaw to extract files from Google Drive folders and share them with unauthorized parties, surveil recent email activity and send emails on behalf of a user, and pilfer private source code from a connected GitHub repository.

The vulnerability “effectively breaks Chrome’s extension security” by creating “a privilege escalation primitive across extensions, something Chrome’s security model is explicitly designed to prevent,” Gispan wrote.

Claude relies on text, user interface semantics, and interpretation of screenshots to make decisions, all things that an attacker can control on the input side. The researchers modified Claude’s user interface to remove labels and indicators around sensitive information, like passwords and sharing feedback, then prompted Claude to share the files with an outside server.

That means cybersecurity defenders often have nothing obviously malicious to detect. Where there is visible activity, the model can be prompted to cover its tracks by deleting emails and other evidence of its actions.

Ax Sharma, Head of Research at Manifold Security, called the vulnerability “a useful demonstration of why monitoring AI agents at the prompt layer is fundamentally insufficient.”

“The most sophisticated part of this attack isn’t the injection, but that the agent’s perceived environment was manipulated to produce actions that looked legitimate from the inside,” said Sharma. “That’s the class of threat the industry needs to be building defenses for.”

Gispan said LayerX reported the flaw to Anthropic on April 27, but claimed the company issued only a “partial” fix for the problem. According to LayerX, Anthropic responded a day later to say that the bug was a duplicate of another vulnerability already being addressed in a future update.

While that fix, issued May 6, introduced new approval flows for privileged actions that made it harder to exploit the same flaw, Gispan said he was still able to take over Claude’s agent in some scenarios.

“Switching to ‘privileged’ mode, even without the user’s notification or consent, enabled circumventing these security checks and injecting prompts into the Claude extension, as before,” Gispan wrote.

Anthropic did not respond to a request for comment from CyberScoop on the research and mitigation efforts.

The post Flaw in Claude’s Chrome extension allowed ‘any’ other plugin to hijack victims’ AI appeared first on CyberScoop.