Introducing Proactive Hardening and Attack Surface Reduction (PHASR) for Linux and macOS

Cloud environments have changed how security teams detect and respond to threats. Signals come from more places, identities are harder to track, and attacks rarely stay within a single system. For many teams, the challenge is no longer visibility. It is having the risk context to understand what matters and act on it quickly. This shift is reflected in the conversations shaping this year’s Rapid7 Global Cybersecurity Summit.

Taking place May 12–13, the summit explores how detection and response are evolving across cloud, identity, and endpoint environments. The focus is practical: how attacks actually unfold, how teams respond under pressure, and how detection strategies need to adapt.

One of the clearest themes across the agenda is that traditional detection models are struggling to keep pace with attackers. Environments are more dynamic, and attackers are more targeted. Catching everything is no longer realistic, and in many cases it is not useful.

Sessions like The New Rules of Detection Engineering will examine this shift in detail. The focus moves away from volume and toward precision. It will ask questions like: What makes a detection meaningful? How should teams prioritize signals? And how can detection strategies support real outcomes rather than just generate alerts? This is especially important in cloud environments, where context changes quickly and signals are often incomplete.

To improve detection, teams need to understand how attacks behave in practice. Several sessions across the summit focus on this directly.

The Reality of Running a SOC in 2026 will explore how modern attacks begin, from identity misuse to cloud misconfigurations, and how they evolve over time. Rather than following a predictable path, attacks move across systems, taking advantage of gaps in visibility and delayed decisions.

This theme continues in sessions like Inside the Modern SOC, where attendees follow a real investigation from first alert to outcome. These walkthroughs show how signals are correlated across environments and how decisions are made when time and clarity are limited.

Cloud security also requires a closer connection between exposure and detection. In many cases, incidents begin long before an alert is triggered.

Sessions such as From Cloud Exposure to Runtime Attack explore how misconfigurations, permissions, and overlooked risks lead to active threats. The focus is on how teams connect exposure insights with runtime behavior to improve prioritization and respond earlier in the attack lifecycle.

This is a practical shift. Detection is no longer a separate function but part of a broader process that starts with understanding exposure and continues through to response.

Across these sessions, a consistent message emerges: Detection strategies need to be grounded in how environments actually behave, not how they are expected to behave.

This means focusing on signal quality rather than volume, connecting data across cloud, identity, and endpoint, and building workflows that support faster decisions. It also means accepting that not all alerts have equal weight, and that prioritization is a core part of modern detection.

Cloud detection is just one part of a broader shift happening across the summit. Sessions on MDR, AI, and exposure management all connect back to the same idea. Security operations must move earlier, reduce noise, and act with greater confidence.

If you are rethinking how your team detects and responds to threats in cloud and hybrid environments, this is where those conversations come together.

Join us May 12–13 and see how security teams are evolving their detection strategies for 2026.

Palo Alto Networks has disclosed the details of its analysis of Google Cloud Platform’s Vertex AI.

The post Google Addresses Vertex Security Issues After Researchers Weaponize AI Agents appeared first on SecurityWeek.

This overview of the basics of Cloud Security includes some tips and resources for getting started in defending the cloud.

The post Cloud Security: Tips and Resources for Securing the Cloud appeared first on Black Hills Information Security, Inc.

After validating stolen credentials using TruffleHog, the hacking group began enumerating AWS services and moving laterally.

The post TeamPCP Moves From OSS to AWS Environments appeared first on SecurityWeek.

If you're a security leader operating in Germany, Austria, or Switzerland, you already know that compliance isn't a checkbox. It's a competitive differentiator. Rapid7 has completed BSI C5 Type 2 attestation for the Rapid7 Command Platform, including Threat Command, and it's a milestone worth unpacking.

This isn't just a badge on a webpage. It's proof that our security controls work, not just on paper, but in practice, over time.

The Cloud Computing Compliance Criteria Catalogue (C5) was developed by Germany's Federal Office for Information Security (BSI). It sets some of the most rigorous cloud security standards in the world, covering everything from data protection to operational transparency.

A Type 2 attestation is the gold standard within that framework. Unlike a point-in-time audit, Type 2 validates that security controls aren't just well-designed, but that they're actively working consistently over a sustained period. It's the difference between a security promise and a security proof.

For organizations in the DACH region, C5 is more than a nice-to-have. It's a procurement requirement for German federal agencies, critical infrastructure operators, healthcare institutions, and financial services firms. If you're operating in any of these sectors, your cloud providers need to meet this bar. Rapid7 now does.

Whether you're evaluating security vendors, managing compliance obligations, or looking to strengthen your organization's risk posture, the question is the same: How do you know your cloud security provider actually does what it says?

BSI C5 Type 2 attestation answers that question. It's independent, rigorous, and sustained over time. While rooted in German regulatory requirements, C5 is increasingly recognized as a benchmark for secure cloud operations across Europe. It's one of the clearest signals that a cloud provider has the operational maturity to handle sensitive environments.

The Rapid7 Command Platform unifies exposure management with detection and response, giving security teams clear visibility across their attack surface. Threat Command extends that protection further, identifying and helping remediate threats across the clear, deep, and dark web. Both are now independently validated against one of the world's toughest cloud security frameworks.

Trusting a security vendor shouldn't require a leap of faith. Independent validation exists so you have the evidence to make that call with confidence. This attestation reflects our continued investment in meeting the highest security standards for customers across Germany and the wider European market. It is a milestone that speaks directly to the conversations we have every day with public sector and enterprise organizations that need more than a promise.

They need proof that a security provider's controls have been tested, verified, and proven to hold up over time. That's the kind of assurance that matters when the stakes are high.

Ready to see the Command Platform in action? Visit Rapid7.com for a free trial.

We are excited to share Rapid7’s recognition in The Forrester Wave™: Cloud Native Application Protection Solutions (CNAPP), Q1 2026 [1]. We see this acknowledgment as a milestone that highlights our strategic evolution and continued drive to help security teams shift from reactive defense to proactive, preemptive response.

Threat actors today know that organizations with static, moment-in-time snapshots of their environments struggle to identify misconfigurations, overprivileged identities, and vulnerabilities in cloud environments. That’s why effective cloud security has shifted from isolated tools that lock down a single container or run a standalone scanner to platforms that are an integral part of a broader continuous exposure management and threat detection and response (TDR) strategy.

One of the most critical challenges for modern security teams is protecting their technology stacks with fragmented security tools. That’s why integrations are so important. The Forrester report states: "Rapid7’s outstanding third-party solution integration includes asset management, third-party solutions, bidirectional integration with ticketing systems, SIEM integration, and SOAR and ASPM tool integrations."

To us, this recognition reflects our belief that by integrating deeply with remediation workflows, whether it’s automated ticketing or advanced application security posture management (ASPM), we eliminate the silos that prevent cloud security from becoming a seamless part of an organization’s security operations.

Cloud security does not live in a vacuum; security leaders need to understand the potential impact of cloud or container vulnerabilities and misconfigurations within the wider business and cybersecurity program. Security operations teams need cloud alerts with the relevant context delivered to their tools and workflows. This is why bidirectional integrations and automation are critical in modern security platforms.

Forrester’s evaluation notes: "[Rapid7’s] solid innovation focuses on delivering a unified CNAPP [platform] that helps users protect cloud workloads using temporal intelligence and trending."

We believe this finding underscores our ability to arm security teams with threat and business-centric context. We show how exposures and misconfigurations evolve over time. This empowers organizations to go beyond static snapshots of risk to achieve more proactive and effective remediation. At the core of this capability is our ability to deliver customers an expansive, continuous view of their attack surfaces. Whether an organization monitors their environment with Rapid7 or they utilize third-party scanners, our Command Platform ingests these findings and translates them into actionable remediation plans that set the foundation for automated mobilization.

Since our participation in Forrester’s evaluation process for this report, we have continued to introduce several important new features and innovations. These updates support our customers’ cloud security requirements.

In January 2026, we announced a strategic partnership with ARMO, the creators of Kubescape, to integrate runtime cloud and application security into the Rapid7 Exposure Command Platform.

Security teams are tired of seeing attacks only after the damage is done. By integrating ARMO’s continuous kernel-level observability (eBPF) into our platform, teams now have runtime visibility into cloud behavior, enabling them to distinguish normal cloud activity from genuine threats. They can then automatically terminate malicious processes or pause compromised containers to prevent lateral movement.

With Rapid7 cloud security, organizations can shift from seeing ‘potential’ risk to mitigating ‘active’ threats, fully completing the loop between preemptive security and proactive response.


[1] The Forrester Wave™: Cloud Native Application Protection Solutions (CNAPP), Q1 2026, Forrester Research, Inc., February 17, 2026.

Forrester does not endorse any company, product, brand, or service included in its research publications and does not advise any person to select the products or services of any company or brand based on the ratings included in such publications. Information is based on the best available resources. Opinions reflect judgment at the time and are subject to change. For more information, read about Forrester’s objectivity here.

The Great Wall of China was built to slow northern raiders and prevent steppe armies from riding straight into the empire’s heart. Yet in 1644, its most impregnable fortress fell without a siege.

At Shanhai Pass, where the wall meets the Bohai Sea, General Wu Sangui commanded the eastern gate. Behind him: a rebel army had just taken Beijing, the emperor was dead, and the Ming Dynasty was buckling under internal crisis. Ahead: Manchu forces who had spent decades probing for weakness. Wu faced the oldest dilemma in fortress warfare: who is the greater threat?

He opened the gate. The Manchus poured through, defeated the rebels, and never left. They founded the Qing Dynasty and ruled China for the next 268 years, the last imperial dynasty before the republic.

The wall didn’t fail. The stone held. What broke was the human system it depended on.

Walls do not fail because the bricks are weak. They fail because the system around the wall is weak. Underpaid guards get bribed, gate procedures degrade, supply lines break. The attacker does not need to knock the wall down when they can walk through the gate.

That is why I disagree with the increasingly popular framing that AI security is fundamentally a cloud infrastructure problem. Cloud security matters. Identity, telemetry, and incident response are table stakes. But treating AI risk as something you can solve primarily by hardening the hosting layer is a comforting simplification, not a complete threat model.

Palo Alto Networks recently reported that 99% of organizations experienced at least one attack on an AI system in the past year. If nearly everyone is getting hit, the right conclusion is not “build a higher wall.” It is “we are defending the wrong boundaries.”

A fortress mindset starts with an implicit assumption: secure the infrastructure and you secure the system. That mental model can work when the system boundary is clean and the workload is deterministic, but AI breaks both assumptions. Modern AI stacks are ecosystems that depend on components sitting outside the neat perimeter even when a model runs inside your cloud tenant: open-source libraries, data pipelines, evaluation tools, plug-ins, agent frameworks, and the humans who can change any of the above. If your security plan begins and ends with cloud controls, you will build excellent defenses around a system that attackers are not planning to assault head-on. Attackers route around strength and target weakness. Why scale the wall when someone at the gate is underpaid, overworked, or facing an impossible choice?

Consider the trust problem. Most organizations are consuming models trained elsewhere, fine-tuning them, and shipping them into production with limited internal expertise. Even if you self-host, you did not write the model or curate the training data. Cloud hardening does not change that reliance; it just makes the box look more secure. The most dangerous failures are not always intrusions but permissioned outcomes. AI agents turn mixed-trust inputs into execution, and when an agent can read internal content, open tickets, or trigger workflows, the attack surface moves to whatever the agent is allowed to do. If an attacker can influence what the agent consumes, they can influence what it executes, often without malware or exploit chains. Real-world AI incidents frequently involve the glue: telemetry, orchestration, plug-ins, and vendor services beside the model.

Humans remain the soft underbelly. AI security discussions obsess over architecture while threat actors obsess over access paths. Cloud controls cannot prevent coercion, social engineering, or insider risk. If a small set of people can approve changes to an AI system’s tools or policies, that is where the attacker will focus.

History teaches this lesson clearly: the Great Wall’s garrison soldiers took payments to allow traders and smugglers to pass, to skip patrols, or to neglect watch duties. Irregular pay and delayed wages made them susceptible. Today, the “bribe” often isn’t cash. It might be a phishing link, a fake vendor request, or pressure to cut corners during a production crisis. The strongest wall doesn’t matter if the person guarding the gate is persuaded to open it.

Begin with a premise security leaders should already accept: everything can be breached. Your job is to reduce the odds, detect threats quickly, and limit the damage when prevention fails.

Threat model the entire AI system, not just where it’s hosted. Include data supply chains, tooling, evaluation pipelines, plug-ins, agents, and the people who can change them. If your threat model leaves out upstream and downstream dependencies, it’s incomplete.

Treat non-human identities as serious risks. Agents, service accounts, and tool credentials should be managed like privileged user accounts. Apply zero standing privileges (no always-on access), just-in-time access, contextual approvals for high-impact actions, and monitoring for unusual tool behavior. The moment you give an agent broad permissions “for productivity,” you’ve created a new control plane that attackers can hijack.
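As an illustration only, here is a minimal Python sketch of what zero standing privileges and just-in-time access could look like for an agent identity. The broker, the action names in HIGH_IMPACT_ACTIONS, and the 15-minute cap are hypothetical placeholders, not any product's API: every grant is scoped to one action, expires automatically, and high-impact actions are refused unless a named approver is attached.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical action names treated as high-impact; placeholders only.
HIGH_IMPACT_ACTIONS = {"change_permissions", "delete_records", "send_external_email"}


@dataclass(frozen=True)
class Grant:
    identity: str                      # agent, service account, or tool credential
    action: str
    expires_at: datetime
    approved_by: Optional[str] = None  # kept for the audit trail


class JitBroker:
    """Issues short-lived, per-action grants instead of standing privileges."""

    def __init__(self, max_ttl: timedelta = timedelta(minutes=15)):
        self.max_ttl = max_ttl
        self.grants: list = []

    def request(self, identity, action, ttl, approved_by=None, now=None):
        now = now or datetime.now(timezone.utc)
        # Contextual approval: high-impact actions need a named human approver.
        if action in HIGH_IMPACT_ACTIONS and approved_by is None:
            raise PermissionError(f"{action} requires approval")
        # Cap every grant so nothing quietly turns into standing access.
        grant = Grant(identity, action, now + min(ttl, self.max_ttl), approved_by)
        self.grants.append(grant)
        return grant

    def is_allowed(self, identity, action, now=None):
        now = now or datetime.now(timezone.utc)
        return any(
            g.identity == identity and g.action == action and g.expires_at > now
            for g in self.grants
        )
```

Note that a request for two hours of access is silently capped at fifteen minutes; the agent has to come back and ask again, which is exactly the friction that makes broad "for productivity" permissions visible.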

Build audit-grade change control. You should be able to answer: who changed agent permissions, retrieval sources, tool bindings, or policy gates? If those controls can be modified quietly, you’re running a system that’s one rushed change away from becoming a security incident.

Invest in detection that assumes manipulation, not just compromise. The hard part of AI misuse is that malicious actions can look legitimate in traditional logs. You need traceability from input to outcome: what context was fed in, what tool calls happened, what policy was active, and what boundary was crossed.
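To make "traceability from input to outcome" concrete, the following Python sketch records one agent run: which context sources were fed in (stored as SHA-256 digests so the exact content can be matched later), which tool calls happened, which policy version was active, and which calls crossed the allowed-tools boundary. The AgentTrace structure and its field names are assumptions for illustration, not a standard schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AgentTrace:
    request_id: str
    policy_version: str                                    # policy gate active for this run
    context_sources: list = field(default_factory=list)    # what was fed in
    tool_calls: list = field(default_factory=list)         # what the agent did
    boundary_violations: list = field(default_factory=list)

    def add_context(self, source: str, content: str) -> None:
        # Hash the content so the trace proves *what* was read without storing it.
        digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
        self.context_sources.append({"source": source, "sha256": digest})

    def add_tool_call(self, tool: str, args: dict, allowed_tools: set) -> None:
        self.tool_calls.append({"tool": tool, "args": args})
        # A call outside the allowed set is the "boundary crossed" signal.
        if tool not in allowed_tools:
            self.boundary_violations.append(tool)

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)
```

A trace like this lets an investigator ask which retrieved document preceded an unexpected tool call, a question ordinary infrastructure logs usually cannot answer.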

Cloud security is necessary—but not sufficient. When vendors say AI security is mainly a cloud infrastructure problem, I hear the modern version of “the wall is tall, so we’re safe.” Wu Sangui’s wall was tall too, stretching to the sea at the empire’s edge. None of that mattered when the system around it collapsed. Empires fall from within.

AI security isn’t solved by building a better fortress. It’s solved by governing delegated authority, hardening the supply chain, and building systems that can prove what happened when—not if—something goes wrong.

David Schwed is the COO of SVRN, an AI infrastructure company focused on building decentralized, sovereign intelligence systems that empower users with data privacy and autonomous computational power.

The post AI security’s ‘Great Wall’ problem appeared first on CyberScoop.

The phrase “Move fast and break things” is a guiding philosophy in the technology industry. The phrase was coined by Meta CEO and founder Mark Zuckerberg more than two decades ago: an operational directive for Facebook developers to prioritize speed and innovation even at the cost of stability. “Unless you are breaking stuff,” Zuckerberg told Business Insider in a 2009 interview, “you are not moving fast enough.”

But Zuckerberg’s call was heard well beyond Facebook’s offices. The tech industry has embraced the philosophy for close to two decades, with benefits that are visible all around us: from TikTok influencers to contactless mobile payments, self-driving taxis, and AI-powered glasses.

Practically, however, the culture of “move fast and break things” produced firms that prioritize fast release cycles and feature development over software security and resilience. They move fast and make broken things: vulnerable and poorly designed applications, services and devices that are preyed on by cybercriminal groups and hostile nations. Consider the China-backed APT groups targeting both known and “zero-day” flaws in on-premises Microsoft SharePoint instances in 2025 and Ivanti VPN devices in 2023. Those campaigns led to the compromise of hundreds of organizations globally, including U.S. federal agencies and critical infrastructure operators.

Then there was the campaign by the China-backed threat actor UNC6395, which targeted Salesforce customers using OAuth tokens stolen from the third-party application Salesloft Drift to exfiltrate large volumes of data from hundreds of Salesforce instances.

These incidents highlight two key features of today’s cyberthreat landscape. First, attackers exploit older applications with legacy code that contains high-severity security vulnerabilities. Second, they target large, complex cloud platforms like Salesforce by compromising vulnerable third-party integrations, software dependencies, and poorly managed APIs.

This problem is compounded by a dangerous assumption: that software suppliers are trustworthy and secure. This mindset is outdated. In the past, supply chain attacks were rare, development cycles took months or years, and applying patches quickly was the gold standard. Today, in the “move fast” era, code can go from development to production in days, hours, or even seconds.

Consider the recent Trust Wallet breach. In December, the cryptocurrency application vendor disclosed that hackers stole approximately $8.5 million in crypto assets through a compromised Google Chrome extension. The root cause was a November outbreak of the Shai Hulud registry-native worm, which leaked Trust Wallet developers’ GitHub credentials. With these credentials, attackers accessed Trust Wallet’s browser extension source code and the Chrome Web Store (CWS) API key, the company said in a blog post. This allowed them to upload malicious extension builds directly to the store, bypassing Trust Wallet’s standard security reviews. Within days, Trust Wallet users awoke to find their wallets emptied.

By compromising “pre-blessed” channels like software updates from trusted suppliers or open source projects, criminal and nation-state attackers can extend their reach into sensitive IT environments.

The solution to problems like this starts with recognizing that the “move fast and break things” era must end. As software powers everything from database servers to dishwashers and tractors, vendors must prioritize security to meet market demands and regulatory requirements. This means proving their software is secure. Traditional application security testing tools—like software composition analysis (SCA), static application security testing (SAST), and dynamic application security testing (DAST)—are part of the solution.

However, today’s threat landscape requires software publishers to look beyond appsec’s “usual suspects.” They must test compiled binaries before release to detect tampering or malicious code that typically evades traditional application security tools. After all, that’s what we saw with incidents like the hacks of SolarWinds’ Orion or VoIP provider 3CX’s Desktop App.

Software publishers also need to prioritize code quality, security and transparency. They can do that by establishing ambitious “zero vulnerability” goals that incentivize them to address problems like “code rot” (reliance on old and vulnerable software modules). They must also embrace transparency by publishing bills of materials for their products—including SBOMs (software bills of materials), MLBOMs (machine learning bills of materials), and SaaSBOMs. Knowing what is in the software your organization consumes can be critical to heading off attacks that exploit vulnerable software dependencies or other supply chain weaknesses.
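As a small illustration of why a published bill of materials helps, the Python sketch below reads a trimmed SBOM in the CycloneDX JSON format (one common SBOM format) and reports components whose exact versions appear in an advisory list. The component names and the KNOWN_VULNERABLE feed are hypothetical; real tooling would match on package URLs and version ranges rather than exact strings.

```python
import json

# A trimmed, CycloneDX-style SBOM; real documents carry many more fields.
SBOM_JSON = """
{
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "components": [
    {"type": "library", "name": "examplelib", "version": "1.2.0"},
    {"type": "library", "name": "otherlib", "version": "4.0.1"}
  ]
}
"""

# Hypothetical advisory feed: package name -> set of known-vulnerable versions.
KNOWN_VULNERABLE = {"examplelib": {"1.1.0", "1.2.0"}}


def vulnerable_components(sbom_text, advisories):
    """Return "name@version" for each SBOM component listed in the advisories."""
    sbom = json.loads(sbom_text)
    hits = []
    for comp in sbom.get("components", []):
        if comp.get("version") in advisories.get(comp.get("name"), set()):
            hits.append(f'{comp["name"]}@{comp["version"]}')
    return hits
```

Without the SBOM, a consumer would have to reverse-engineer the shipped software to learn that it bundles the vulnerable examplelib 1.2.0.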

Should tech firms still move fast and innovate? Absolutely. But in 2026, innovation and rapid releases must be balanced with an even greater priority: building secure, resilient technology that protects both vendors and customers from attacks. Instead of “move fast and break things,” we need a new rallying cry: “Make Smart and Safe Things.”

Saša Zdjelar is the Chief Trust Officer (CTrO) at ReversingLabs and Operating Partner at Crosspoint Capital with nearly 20 years of Fortune 10 global executive leadership experience. His CTrO scope includes leadership, oversight and governance of the CISO/CSO function, including product security, as well as partnering with other leaders on corporate and product strategy, strategic partnerships and research, and customer and technology advisory boards, including sponsoring the ReversingLabs CISO Council.

The post We moved fast and broke things. It’s time for a change. appeared first on CyberScoop.

At Rapid7, we track a wide range of threats targeting cloud environments, where a frequent objective is hijacking victim infrastructure to host phishing or spam campaigns. Beyond the obvious security risks, this approach allows threat actors to offload their operational costs onto the target company, often resulting in significant, unwanted bills for services the victim never intended to use.

Rapid7 recently investigated a cloud abuse incident in which threat actors leveraged compromised AWS credentials to deploy phishing and spam infrastructure using AWS WorkMail, bypassing the anti-abuse controls normally enforced by AWS Simple Email Service (SES). AWS SES is a general-purpose, API-driven email platform intended for application-generated email such as transactional notifications and marketing messages. This technique allows the threat actor to leverage Amazon’s high sender reputation to masquerade as a valid business entity, with the ability to send email directly from victim-owned AWS infrastructure. Because it generates minimal service-attributed telemetry, the threat actor's activity is also difficult to distinguish from routine activity. Any organization with exposed AWS credentials and permissive Identity and Access Management (IAM) policies is potentially at risk, particularly organizations without guardrails or monitoring around WorkMail and SES configuration.

In this post, we analyze a real-world incident observed by our MDR team in which threat actors abused native AWS email services to build phishing and spam infrastructure inside a compromised cloud environment. We reconstruct the attacker’s progression from credential validation and IAM reconnaissance to bypassing Amazon SES safeguards by pivoting to AWS WorkMail. Along the way, we highlight how legitimate service abstractions can be leveraged to evade detection, examine the resulting logging and attribution gaps, and outline practical detection and prevention strategies defenders can use to identify and disrupt similar cloud-native abuse.

AWS WorkMail is a fully managed business email and calendaring service that allows organizations to operate corporate mailboxes without deploying or maintaining their own mail servers. It supports standard email protocols such as IMAP and SMTP, as well as common desktop and mobile clients, making it a lightweight, pay-as-you-go alternative for teams already operating within AWS.

To understand the activities performed by threat actors in the incident, it’s important to first introduce several core concepts within AWS WorkMail.

An Organization is the top-level container in WorkMail. It represents an isolated email environment that holds all users, groups, and domains. Each WorkMail organization is region-specific and operates independently, which allows attackers to create disposable, self-contained email infrastructures with minimal setup.

Users represent individual mail-enabled identities within a WorkMail organization. After a user is created using the “workmail:CreateUser” API call, a mailbox can be assigned via a “workmail:RegisterToWorkMail” API call. Once registered, the user can authenticate to the AWS WorkMail web client or connect via standard email protocols and immediately begin sending and receiving email.
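Because the user-then-mailbox sequence is what turns a WorkMail organization into working email infrastructure, defenders could look for it directly in CloudTrail. The Python sketch below assumes CloudTrail records have already been parsed into dicts using the standard eventSource, eventName, eventTime, errorCode, and userIdentity.arn fields; it reports principals that successfully called CreateUser and then RegisterToWorkMail, in that order. It is a starting point, not a tuned detection.

```python
# The ordered steps described above: create a WorkMail user, then give it a mailbox.
CHAIN = ("CreateUser", "RegisterToWorkMail")


def workmail_mailbox_chains(records):
    """Return principals that successfully completed every step of CHAIN, in order."""
    progress = {}
    # CloudTrail eventTime values share one ISO 8601 format, so string order is time order.
    for rec in sorted(records, key=lambda r: r["eventTime"]):
        if rec.get("eventSource") != "workmail.amazonaws.com" or rec.get("errorCode"):
            continue  # other services, and failed calls, do not advance the chain
        arn = rec["userIdentity"]["arn"]
        step = progress.get(arn, 0)
        if step < len(CHAIN) and rec.get("eventName") == CHAIN[step]:
            progress[arn] = step + 1
    return sorted(arn for arn, step in progress.items() if step == len(CHAIN))
```

In an account that never uses WorkMail, any hit from this check is worth investigating on its own.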

Groups are collections of users that can receive email on behalf of multiple members. They are typically used for distribution lists or shared inboxes and can simplify bulk message delivery or internal coordination within a WorkMail organization.

Domains define the email address namespace used by a WorkMail organization (e.g., @example.com). Before a domain can be used, ownership must be verified. This verification process leverages the standard domain verification mechanism of Amazon Simple Email Service, typically via DNS records. Once verified, the domain can be actively used for sending and receiving email, enabling threat actors to operate from attacker-controlled, but seemingly legitimate, domains.
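Since domain verification runs through SES, one simple guardrail is to compare every newly verified SES identity against the domains the organization actually owns. The following Python sketch assumes parsed CloudTrail records and assumes that the verified name appears in requestParameters under "domain" for the SES v1 VerifyDomainIdentity call and "emailIdentity" for the SES v2 CreateEmailIdentity call; confirm both keys against your own logs. APPROVED_DOMAINS is a hypothetical allowlist.

```python
# Hypothetical allowlist of domains the organization actually owns.
APPROVED_DOMAINS = {"example.com"}

# Assumed requestParameters keys for SES v1 and SES v2 identity creation events.
IDENTITY_PARAM = {"VerifyDomainIdentity": "domain", "CreateEmailIdentity": "emailIdentity"}


def unapproved_identities(records, approved=APPROVED_DOMAINS):
    """Return (principal ARN, domain) for SES identities outside the allowlist."""
    findings = []
    for rec in records:
        key = IDENTITY_PARAM.get(rec.get("eventName"))
        if rec.get("eventSource") != "ses.amazonaws.com" or key is None:
            continue
        identity = (rec.get("requestParameters") or {}).get(key, "")
        # For email-address identities, compare only the domain part.
        domain = identity.rsplit("@", 1)[-1].lower()
        if domain and domain not in approved:
            findings.append((rec["userIdentity"]["arn"], domain))
    return findings
```

Exact matching is deliberately strict; an organization that uses subdomains would need to extend the comparison.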

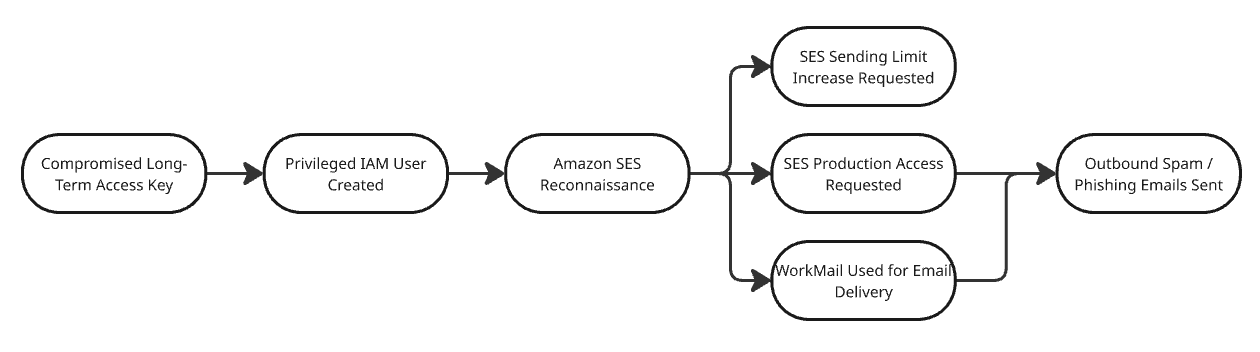

The diagram below contains a graphical representation of the key events carried out by the attackers throughout the attack, starting with initial access actions, continuing through privilege escalation, and ending with the achievement of objectives.

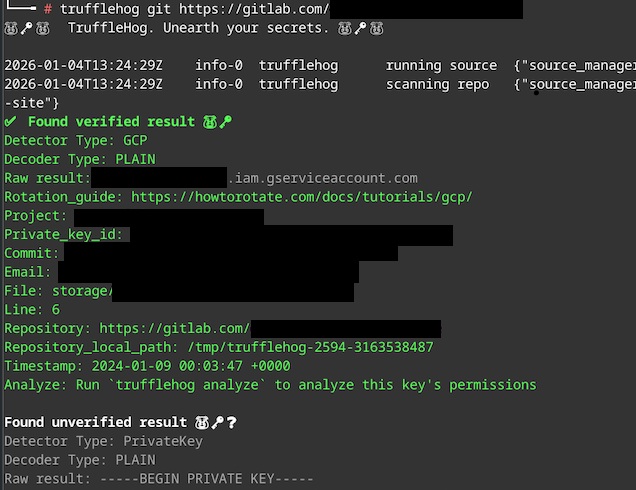

The compromise began with the exposure of long-term AWS access keys. The first indication of malicious activity was an “sts:GetCallerIdentity” API call with the User-Agent set to “TruffleHog Firefox.” This strongly suggests the use of TruffleHog, a tool commonly leveraged by adversaries to discover and validate leaked credentials from sources such as GitHub, GitLab, and public S3 buckets. Rapid7 has frequently observed TruffleHog usage in active campaigns, including activity attributed to groups such as the Crimson Collective.
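The validation step described above leaves a distinctive trace: an sts:GetCallerIdentity call whose User-Agent mentions TruffleHog. A minimal Python sketch of that check over parsed CloudTrail records follows. It uses only standard CloudTrail fields, and because the User-Agent is attacker-controlled and trivial to change, it should be treated as a low-cost, high-signal hint rather than a complete detection.

```python
def trufflehog_validations(records):
    """Return (principal ARN, source IP) for GetCallerIdentity calls made with a TruffleHog user agent."""
    hits = []
    for rec in records:
        if (
            rec.get("eventSource") == "sts.amazonaws.com"
            and rec.get("eventName") == "GetCallerIdentity"
            and "trufflehog" in rec.get("userAgent", "").lower()
        ):
            hits.append((rec["userIdentity"].get("arn"), rec.get("sourceIPAddress")))
    return hits
```

Any hit means the key has almost certainly leaked and should be rotated immediately, regardless of what follows.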

Several days after this initial credential validation, we observed suspicious activity involving a second IAM user authenticated via long-term access keys. While we cannot conclusively prove that both users were accessed by the same operator, multiple factors suggest they were part of the same intrusion activity. Notably, both authentications originated from the same geographic region, which was anomalous for the victim’s normal operating patterns. Throughout the incident window, access to both accounts was conducted through a rotating set of IP addresses associated primarily with cloud service providers such as Amazon and DigitalOcean. This infrastructure choice is consistent with common adversary tradecraft used to obfuscate true origin and blend into legitimate cloud-to-cloud traffic.
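One way to surface the rotating, cloud-hosted source addresses described above is to map each principal to the distinct provider-owned IPs it used. In the Python sketch below, CLOUD_RANGES holds placeholder RFC 5737 documentation blocks, not real provider ranges; in practice you would load published lists such as AWS's ip-ranges.json. The sketch also skips non-IP sourceIPAddress values, which CloudTrail records when an AWS service makes a call on your behalf.

```python
import ipaddress
from collections import defaultdict

# Placeholder ranges (RFC 5737 documentation blocks), NOT real provider ranges.
CLOUD_RANGES = {
    "provider-a": [ipaddress.ip_network("198.51.100.0/24")],
    "provider-b": [ipaddress.ip_network("203.0.113.0/24")],
}


def classify_ip(ip):
    """Return the provider owning `ip`, or None if unknown or not an IP address."""
    try:
        addr = ipaddress.ip_address(ip)
    except ValueError:  # e.g. "ses.amazonaws.com" for service-originated calls
        return None
    for provider, nets in CLOUD_RANGES.items():
        if any(addr in net for net in nets):
            return provider
    return None


def cloud_sourced_ips(records):
    """Map each principal to the set of distinct cloud-hosted source IPs it used."""
    seen = defaultdict(set)
    for rec in records:
        ip = rec.get("sourceIPAddress", "")
        if classify_ip(ip):
            seen[rec["userIdentity"]["arn"]].add(ip)
    return dict(seen)
```

A human IAM user that suddenly authenticates from many different cloud-provider addresses is a stronger signal than any single address.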


Following initial access, the first compromised user was used to perform basic environment discovery via native AWS APIs. These attempts repeatedly resulted in AccessDenied errors, indicating that the exposed credentials were constrained by limited permissions. The activity was conducted using the AWS command-line interface (CLI), suggesting hands-on, interactive exploration by the threat actor rather than automated tooling.
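The failed discovery attempts above can be spotted by counting how many *different* APIs a principal was denied, which separates exploration from a misconfigured job retrying one call. The following Python sketch works on parsed CloudTrail records; the DENIED_CODES set is a common but not exhaustive list, and the threshold of five distinct APIs is an arbitrary starting value. The optional cli_only filter reflects the AWS CLI user agent seen in this incident.

```python
from collections import defaultdict

# Error codes CloudTrail commonly records for authorization failures (not exhaustive).
DENIED_CODES = {"AccessDenied", "AccessDeniedException", "Client.UnauthorizedOperation"}


def denied_discovery(records, min_distinct_apis=5, cli_only=False):
    """Return principals denied on many different APIs, with the APIs they tried."""
    denied = defaultdict(set)
    for rec in records:
        if rec.get("errorCode") not in DENIED_CODES:
            continue
        # Optionally keep only AWS CLI traffic, matching hands-on exploration.
        if cli_only and not rec.get("userAgent", "").startswith("aws-cli/"):
            continue
        api = f'{rec.get("eventSource", "")}:{rec.get("eventName", "")}'
        denied[rec["userIdentity"]["arn"]].add(api)
    return {arn: sorted(apis) for arn, apis in denied.items() if len(apis) >= min_distinct_apis}
```

Counting distinct APIs rather than raw errors keeps a noisy but harmless retry loop from drowning out a short burst of genuine reconnaissance.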

After encountering these limitations, the adversary shifted activity to the second set of compromised credentials, which possessed significantly broader permissions. With this user, enumeration became more deliberate and structured. The actor began with iam:ListUsers API calls to understand the identity landscape and then used a technique of intentionally triggering API errors to confirm specific permissions without making persistent changes.

As part of this broader discovery effort, the actor also queried Amazon SES to assess its current configuration and readiness for abuse. Specifically, they executed ses:GetAccount and ses:ListIdentities. These calls allowed the adversary to quickly map the operational status of SES within the account. The ses:ListIdentities API call was used to determine whether any verified identities (domains or email addresses) already existed that could be immediately leveraged for sending mail; none were present at the time. In parallel, ses:GetAccount was used to identify whether the account was operating in the SES sandbox, which would impose strict sending limits and require additional steps before large-scale email campaigns could be launched.

This SES-focused reconnaissance indicates early intent to abuse email-sending capabilities and demonstrates how attackers can efficiently evaluate service readiness using only a small number of low-noise management API calls.
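Because this readiness check needs only a couple of read-only calls, it is easy to miss. The Python sketch below flags principals that issued both ses:GetAccount and ses:ListIdentities, the pair observed here, over parsed CloudTrail records. The required set is a parameter so other SES read calls can be added; in an account where SES is never used, even one of these calls from an unexpected principal may merit a look.

```python
from collections import defaultdict


def ses_readiness_checks(records, required=frozenset({"GetAccount", "ListIdentities"})):
    """Return principals that issued every SES call in `required`."""
    calls = defaultdict(set)
    for rec in records:
        if rec.get("eventSource") == "ses.amazonaws.com":
            calls[rec["userIdentity"]["arn"]].add(rec.get("eventName"))
    # Subset test: the principal made every call in `required` (and possibly more).
    return sorted(arn for arn, names in calls.items() if required <= names)
```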

To illustrate the error-triggering technique, the actor attempted to create an IAM user that already existed. The resulting error response confirmed possession of iam:CreateUser permissions without creating a new entity:

```json
{
  "userAgent": "aws-cli/1.22.34 Python/3.10.12 Linux/5.15.0-113-generic botocore/1.23.34",
  "errorCode": "EntityAlreadyExistsException",
  "errorMessage": "User with name xxxx already exists."
}
```

Listing 1: Part of the iam:CreateUser CloudTrail log


A similar validation was performed using iam:CreateLoginProfile. By supplying a password that violated the account’s password policy, the actor received a PasswordPolicyViolationException, confirming their ability to create console login profiles:

{
  "userAgent": "aws-cli/1.22.34 Python/3.10.12 Linux/5.15.0-113-generic botocore/1.23.34",
  "errorCode": "PasswordPolicyViolationException",
  "errorMessage": "Password should have at least one uppercase letter"
}

Listing 2: Part of the iam:CreateLoginProfile CloudTrail log
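The probing in Listings 1 and 2 leaves a recognizable trace: IAM write calls that fail with errors only an authorized caller can receive. The sketch below counts such failures per principal. It assumes parsed CloudTrail records. The error-code set covers only the two cases observed in this incident and is not exhaustive:

```python
# Error codes that, on IAM write APIs, reveal the caller holds the permission
# even though nothing was changed (the two cases seen in Listings 1 and 2).
PROBE_ERRORS = {
    "EntityAlreadyExistsException",
    "PasswordPolicyViolationException",
}


def is_permission_probe(event: dict) -> bool:
    """True if a failed IAM call still showed that the caller was authorized."""
    return (
        event.get("eventSource") == "iam.amazonaws.com"
        and event.get("errorCode") in PROBE_ERRORS
    )


def probes_by_principal(events):
    """Count permission-probe errors per calling principal ARN."""
    counts = {}
    for event in events:
        if is_permission_probe(event):
            arn = event.get("userIdentity", {}).get("arn", "unknown")
            counts[arn] = counts.get(arn, 0) + 1
    return counts
```

An AccessDenied error is deliberately not counted. It shows the caller lacks a permission, which is the noisier pattern seen with the first set of credentials.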


After validating the scope of their privileges, the adversary created a new IAM user, attached the AWS managed policy “AdministratorAccess”, and established a login profile to enable AWS Management Console access. This marked a transition from CLI-based reconnaissance to full GUI-based control, providing unrestricted access and setting the stage for subsequent operational activity.
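The sequence of creating a user, attaching AdministratorAccess, and adding a login profile is a high-signal pattern on its own. The sketch below walks parsed CloudTrail records in time order and reports IAM users that complete all three steps. It assumes the standard `requestParameters.userName` and `requestParameters.policyArn` fields for these IAM events and skips failed calls. It is an illustration, not Rapid7's detection logic:

```python
ADMIN_POLICY_ARN = "arn:aws:iam::aws:policy/AdministratorAccess"


def new_admin_console_users(events):
    """Return user names that were created, given AdministratorAccess,
    and then given a console password, in that order."""
    stage = {}  # userName -> number of steps completed (1..3)
    done = []
    for event in sorted(events, key=lambda e: e["eventTime"]):
        if event.get("eventSource") != "iam.amazonaws.com" or event.get("errorCode"):
            continue  # skip other services and failed calls
        params = event.get("requestParameters") or {}
        user = params.get("userName")
        name = event.get("eventName")
        if name == "CreateUser":
            stage[user] = 1
        elif (
            name == "AttachUserPolicy"
            and stage.get(user) == 1
            and params.get("policyArn") == ADMIN_POLICY_ARN
        ):
            stage[user] = 2
        elif name == "CreateLoginProfile" and stage.get(user) == 2:
            stage[user] = 3
            done.append(user)
    return done
```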

By the end of the discovery phase, the threat actor had established two critical facts:

No verified identities existed in Amazon Simple Email Service (SES).

The account remained restricted by the SES sandbox.

The SES sandbox is explicitly designed to limit fraud and abuse, and its restrictions effectively prevent meaningful phishing or spam campaigns. While an account remains in the sandbox, the following controls apply:

Emails can only be sent to verified identities (email addresses or domains) or the SES mailbox simulator.

A maximum of 200 messages per 24-hour period.

A maximum sending rate of 1 message per second.
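The arithmetic below compares these limits with the 100,000-messages-per-day quota the attacker requested. It considers volume only and ignores the verified-recipient rule, which on its own prevents mailing arbitrary victims. The constant and function names are illustrative:

```python
import math

SANDBOX_DAILY_CAP = 200  # messages per 24-hour period
SANDBOX_MAX_RATE = 1     # messages per second


def sandbox_days_needed(total_messages: int) -> int:
    """Days needed to send `total_messages` under the SES sandbox daily cap."""
    return math.ceil(total_messages / SANDBOX_DAILY_CAP)


# The per-second rate alone would allow 86,400 messages a day,
# so the 200-message daily cap is the binding constraint.
rate_bound = SANDBOX_MAX_RATE * 24 * 60 * 60
print(rate_bound, sandbox_days_needed(100_000))  # 86400 500
```

At 200 messages a day, one day's worth of the requested volume would take 500 days to send.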

These constraints made SES unsuitable for immediate abuse at scale. Rather than abandoning the service, the attacker initiated a process to legitimize higher-volume email sending.

First, they opened a support case with AWS requesting removal from the SES sandbox. In parallel, they used the servicequotas:RequestServiceQuotaIncrease API call to request a substantial increase to the daily sending quota, asking for 100,000 emails per day.

{
  "requestParameters": {
    "serviceCode": "ses",
    "quotaCode": "L-XXXXXX",
    "desiredValue": 100000
  }
}

Listing 3: Request parameters from the RequestServiceQuotaIncrease API call
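Large SES quota requests are rare in most accounts, so they make a useful tripwire. The following is a minimal sketch. It assumes parsed CloudTrail records from Service Quotas with the `requestParameters` fields shown in Listing 3. The 10,000 threshold is a hypothetical value to tune for each environment:

```python
SES_QUOTA_THRESHOLD = 10_000  # hypothetical alerting threshold; tune per account


def risky_ses_quota_requests(events, threshold=SES_QUOTA_THRESHOLD):
    """Yield (principal ARN, quotaCode, desiredValue) for large SES quota requests."""
    for event in events:
        if event.get("eventName") != "RequestServiceQuotaIncrease":
            continue
        params = event.get("requestParameters") or {}
        if params.get("serviceCode") != "ses":
            continue
        desired = float(params.get("desiredValue", 0))
        if desired >= threshold:
            arn = event.get("userIdentity", {}).get("arn", "unknown")
            yield arn, params.get("quotaCode"), desired
```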


During this waiting period, the actor focused on persistence and stealth. They created multiple IAM users with names deliberately chosen to resemble region- or service-scoped automation accounts rather than human operators. To further reduce suspicion during IAM audits, the attacker attached narrowly scoped, SES-only policies to these users instead of broad administrative permissions. This approach preserved operational access while avoiding obvious indicators of compromise such as over-privileged identities.
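The attacker relied on SES-only policies to blend in. One way to surface such identities during an IAM review is to flag policies whose Allow statements grant nothing but `ses:` actions, and then check whether the users holding them were created recently. The following is a minimal sketch. It assumes policy documents have already been retrieved as parsed JSON, for example through IAM's GetPolicyVersion or GetUserPolicy APIs. The helper names are hypothetical:

```python
def _as_list(value):
    # IAM allows a single statement or action to be given without a list.
    return value if isinstance(value, list) else [value]


def is_ses_only_policy(policy: dict) -> bool:
    """True if every Allow statement grants only SES actions.

    Such narrowly scoped identities look benign in audits but can still
    be used to send mail from the account.
    """
    statements = _as_list(policy.get("Statement", []))
    allows = [s for s in statements if s.get("Effect") == "Allow"]
    if not allows:
        return False
    for statement in allows:
        if "Action" not in statement:
            return False  # NotAction or malformed: not a pure SES grant
        actions = _as_list(statement["Action"])
        if not all(a.lower().startswith("ses:") for a in actions):
            return False
    return True
```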

At this stage, the attacker had effectively prepared the account for large-scale email abuse, but they did not wait for AWS approval to proceed.

Rather than remaining idle while SES sandbox removal and quota increases were pending, the attacker pivoted to AWS WorkMail, which offers an alternative email-sending pathway with significantly fewer upfront restrictions.

Using the workmail:CreateOrganization API, the threat actor created multiple WorkMail organizations. They then initiated domain verification workflows for domains designed to appear legitimate and business-like, including:

cloth-prelove[.]me

ipad-service-london[.]com

Domain verification was performed through ses:VerifyDomainIdentity and ses:VerifyDomainDkim, with the calls originating from workmail.amazonaws.com. This highlights an important nuance for defenders: although SES APIs are involved, the activity is driven by WorkMail provisioning rather than traditional SES email campaigns.
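WorkMail makes these SES verification calls on the attacker's behalf, so their origin can separate them from ordinary SES administration. The following is a minimal sketch that relies on two assumptions. First, WorkMail-initiated calls carry a `workmail` hostname in `sourceIPAddress` or `userIdentity.invokedBy`, as in Listing 4. Second, the verified domain appears under `requestParameters.domain`. Check both field details against your own logs:

```python
SES_VERIFY_CALLS = {"VerifyDomainIdentity", "VerifyDomainDkim"}


def workmail_domain_verifications(events):
    """Return domains whose SES verification appears to be driven by WorkMail."""
    domains = set()
    for event in events:
        if event.get("eventSource") != "ses.amazonaws.com":
            continue
        if event.get("eventName") not in SES_VERIFY_CALLS:
            continue
        # Assumption: WorkMail-initiated calls name a workmail.* host here.
        origin = " ".join([
            event.get("sourceIPAddress", ""),
            event.get("userIdentity", {}).get("invokedBy", ""),
        ])
        if "workmail" in origin:
            # Assumption: the domain is logged under requestParameters.domain.
            domain = (event.get("requestParameters") or {}).get("domain")
            if domain:
                domains.add(domain)
    return domains
```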

Once domain verification was completed, the actor created multiple mailbox users directly within WorkMail, such as:

service@ipad-service-london[.]com

marketing@ipad-service-london[.]com

These accounts served two purposes. First, they established persistence at the application layer, independent of IAM. Second, they provided credible sender identities for phishing and spam operations, closely resembling legitimate corporate email addresses.

CloudTrail also logged AWS Directory Service events showing new aliases created for the new sender domains, using the victim’s directory tenant:

CreateAlias

AuthorizeApplication

This pivot is particularly impactful because AWS WorkMail does not implement a sandbox model comparable to SES. Emails can be sent immediately to external, unverified recipients. Additionally, WorkMail supports significantly higher sending volumes than SES sandbox limits. While Rapid7 has not empirically validated the maximum throughput, AWS documentation cites a default upper limit of 100,000 external recipients per day per organization, aggregated across all users.

The attacker had two viable options for sending email through WorkMail:

1. Web interface

Emails sent through the AWS WorkMail web client may surface indirectly in CloudTrail as “ses:SendRawEmail” events. These events are generated because WorkMail uses Amazon Simple Email Service (SES) as its underlying mail transport, even though the messages are composed and sent entirely through the WorkMail application.

While these events are not attributed to an IAM principal, they do expose several pieces of valuable metadata within the “requestParameters” field — most notably the sender’s email address and associated SES identity. This allows defenders to link outbound email activity to specific WorkMail users and recently verified domains, even in the absence of traditional application or message-level logs.

One notable limitation of these “ses:SendRawEmail” events is the absence of a true client source IP address. Because emails sent via the WorkMail web interface are executed by an AWS-managed service on behalf of the mailbox user, CloudTrail records the “sourceIPAddress” as “workmail.<region>.amazonaws.com” rather than the originating IP address of the actor’s browser session. This effectively obscures the attacker’s true network origin and prevents defenders from correlating email-sending activity with suspicious IP ranges, TOR exit nodes, or previously observed intrusion infrastructure.


{
  "eventVersion": "1.11",
  "userIdentity": {
    "type": "AWSService",
    "invokedBy": "workmail.us-east-1.amazonaws.com"
  },
  "eventTime": "2025-12-20T11:26:59Z",
  "eventSource": "ses.amazonaws.com",
  "eventName": "SendRawEmail",
  "awsRegion": "us-east-1",
  "sourceIPAddress": "workmail.us-east-1.amazonaws.com",
  "userAgent": "workmail.us-east-1.amazonaws.com",
  "requestParameters": {
    "sourceArn": "arn:aws:ses:us-east-1:123456789012:identity/malicious-organiation[.]com",
    "destinations": [
      "HIDDEN_DUE_TO_SECURITY_REASONS"
    ],
    "source": "=?UTF-8?Q?Malicious_User?= <marketing@malicious-organiation[.]com>",
    "fromArn": "arn:aws:ses:us-east-1:123456789012:identity/malicious-organiation[.]com",
    "configurationSetName": "gcp-iad-prod-workmail-default-configuration-set",
    "rawMessage": {
      "data": "HIDDEN_DUE_TO_SECURITY_REASONS"
    }
  },
  "responseElements": null,
  "additionalEventData": {
    "SignatureVersion": "4",
    "sesMessageId": "0100019b3c4a8bb7-50af951c-fbd4-4610-bc94-c7fc35733699-000000"
  },
  "requestID": "aff61405-04bd-4969-802a-7ce4d5946949",
  "eventID": "c34ed12d-5bec-3fdd-aef9-57ae4313ca88",
  "readOnly": true,
  "resources": [
    {
      "accountId": "123456789012",
      "type": "AWS::SES::ConfigurationSet",
      "ARN": "arn:aws:ses:us-east-1:123456789012:configuration-set/gcp-iad-prod-workmail-default-configuration-set"
    },
    {
      "accountId": "123456789012",
      "type": "AWS::SES::EmailIdentity",
      "ARN": "arn:aws:ses:us-east-1:123456789012:identity/malicious-organiation[.]com"
    }
  ],
  "eventType": "AwsApiCall",
  "managementEvent": false,
  "recipientAccountId": "123456789012",
  "sharedEventID": "xxx",
  "eventCategory": "xxx"
}

Listing 4: SendRawEmail event logged after an email is sent via the AWS WorkMail web interface


While limited, this telemetry can still be valuable for correlating suspicious sending behavior with recently created WorkMail users or newly verified domains.
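A minimal sketch of that correlation step follows. It extracts the sender address, SES identity, and relaying endpoint from a SendRawEmail record shaped like Listing 4. The field names come from that listing. The function name and output keys are hypothetical:

```python
import re

# Matches the bare address inside "Display Name <user@domain>".
ANGLE_ADDR = re.compile(r"<([^>]+)>")


def summarize_workmail_send(event: dict):
    """Extract sender address and SES identity from a WorkMail SendRawEmail event.

    Returns None for events that were not relayed by WorkMail.
    """
    if event.get("eventName") != "SendRawEmail":
        return None
    invoked_by = event.get("userIdentity", {}).get("invokedBy", "")
    if not invoked_by.startswith("workmail."):
        return None
    params = event.get("requestParameters") or {}
    source = params.get("source", "")
    match = ANGLE_ADDR.search(source)
    sender = match.group(1) if match else source
    identity = params.get("sourceArn", "").split(":identity/")[-1]
    return {
        "time": event.get("eventTime"),
        "sender": sender,
        "identity": identity,
        # No client IP is recorded; only the WorkMail endpoint is available.
        "relay": invoked_by,
    }
```

The resulting `identity` values can be joined against recently verified domains, and `sender` values against recently created WorkMail users, to separate legitimate mail from newly staged infrastructure.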

2. SMTP access

Alternatively, the attacker can authenticate directly to WorkMail’s SMTP endpoint and send messages programmatically. Emails sent via SMTP do not generate CloudTrail events, even when SES data events are enabled, creating a significant blind spot for defenders.

An example Python script used to send email through WorkMail SMTP is shown below:


import smtplib
from email.message import EmailMessage

# Configuration
SMTP_SERVER = "smtp.mail.us-east-1.awsapps.com"
SMTP_PORT = 465
EMAIL_ADDRESS = "email@example.com"
EMAIL_PASSWORD = "****"

# Create the message
msg = EmailMessage()
msg["Subject"] = "WorkMail SMTP"
msg["From"] = EMAIL_ADDRESS
msg["To"] = "<unverified_email>"
msg.set_content("Email Delivered to an Unverified Email via AWS WorkMail")

# Send the email over an implicit-TLS SMTP connection (port 465)
try:
    with smtplib.SMTP_SSL(SMTP_SERVER, SMTP_PORT) as smtp:
        smtp.login(EMAIL_ADDRESS, EMAIL_PASSWORD)
        smtp.send_message(msg)
        print("Email sent successfully!")
except Exception as e:
    print(f"Error: {e}")

Listing 5: Example script that sends messages through AWS WorkMail over SMTP


From an attacker’s perspective, this method is ideal: higher volume, immediate external reach, and minimal centralized logging. From a defender’s perspective, it underscores the importance of monitoring WorkMail organization creation, domain verification events, and mailbox provisioning, as these actions often precede phishing activity that will never be visible in CloudTrail.
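The precursor events named above can be grouped into the three stages of WorkMail staging. The following is a minimal sketch that assumes parsed CloudTrail records. Keying on both `eventSource` and `eventName` keeps WorkMail's CreateUser separate from IAM's CreateUser. This incident does not discuss WorkMail's RegisterToWorkMail call, so mapping it to mailbox provisioning is an added assumption. The stage labels are hypothetical:

```python
# Precursor activity grouped by stage, keyed on (eventSource, eventName).
PRECURSOR_STAGES = {
    ("workmail.amazonaws.com", "CreateOrganization"): "organization_created",
    ("ses.amazonaws.com", "VerifyDomainIdentity"): "domain_verification",
    ("ses.amazonaws.com", "VerifyDomainDkim"): "domain_verification",
    ("workmail.amazonaws.com", "CreateUser"): "mailbox_provisioned",
    # Assumption: added mapping, not observed in this incident.
    ("workmail.amazonaws.com", "RegisterToWorkMail"): "mailbox_provisioned",
}


def workmail_precursors(events):
    """Group successful precursor events by stage."""
    stages = {}
    for event in events:
        if event.get("errorCode"):
            continue  # failed calls did not change anything
        key = (event.get("eventSource"), event.get("eventName"))
        stage = PRECURSOR_STAGES.get(key)
        if stage:
            stages.setdefault(stage, []).append(event)
    return stages


def looks_like_mail_staging(events) -> bool:
    """True when all three stages appear, a pattern worth an analyst's review."""
    return len(workmail_precursors(events)) == len(set(PRECURSOR_STAGES.values()))
```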

This incident illustrates how threat actors can abuse higher-level AWS services to deploy phishing and spam infrastructure closely resembling legitimate enterprise usage. While AWS WorkMail is not designed to support bulk email operations, attackers can still leverage it as an interim capability alongside Amazon SES. By abusing WorkMail’s authenticated mailboxes and relaxed upfront controls, adversaries can begin sending lower volumes of email immediately — well before SES is moved out of the sandbox and higher sending quotas are approved. This staged approach allows attackers to establish sender reputation, validate infrastructure, and maintain operational momentum while bypassing many of the friction points intentionally built into SES.

To mitigate this class of abuse, organizations should combine preventive guardrails with focused detection. Where AWS WorkMail is not required, its use should be explicitly blocked using AWS Organizations Service Control Policies (SCPs) to prevent organization creation and mailbox provisioning. In environments where WorkMail is needed, IAM policies should enforce strict least-privilege access and treat WorkMail and SES administration as privileged operations subject to monitoring and approval. Finally, organizations should reduce the likelihood of initial access by implementing secure development and operational practices — such as secret scanning in code repositories, regular key rotation, and minimizing long-term access keys — to limit the impact of credential leakage and prevent attackers from converting compromised credentials into scalable email abuse.
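For accounts that do not need WorkMail, the SCP can be as simple as a blanket deny on the `workmail:` service prefix. The minimal sketch below builds the policy document in Python so it can be reviewed or passed to an infrastructure-as-code pipeline. The `DenyWorkMail` Sid and the optional `extra_actions` parameter are illustrative choices. For example, `extra_actions` could also deny `ses:VerifyDomainIdentity` where SES is unused. SCPs do not apply to the organization's management account, so WorkMail usage there still needs IAM-level controls and monitoring:

```python
import json


def deny_workmail_scp(extra_actions=()):
    """Build a Service Control Policy that denies all AWS WorkMail actions.

    `extra_actions` is a hypothetical hook for denying related actions too.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyWorkMail",
                "Effect": "Deny",
                "Action": ["workmail:*", *extra_actions],
                "Resource": "*",
            }
        ],
    }


print(json.dumps(deny_workmail_scp(), indent=2))
```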

| Tactic | Technique | Details |
| --- | --- | --- |
| Initial Access | Valid Accounts: Cloud Accounts (T1078.004) | The attacker authenticated to AWS using exposed long-term access keys validated with sts:GetCallerIdentity |
| Persistence | Create Account: Cloud Account (T1136.003) | The attacker created multiple IAM users and AWS WorkMail mailbox users to maintain persistent access |
| Privilege Escalation | Account Manipulation: Additional Cloud Roles (T1098.003) | The attacker attached the AdministratorAccess managed policy to a newly created IAM user |
| Discovery | Cloud Infrastructure Discovery (T1580) | The attacker enumerated IAM users and assessed Amazon SES configuration and sandbox status via API calls |
| Impact | Resource Hijacking: Cloud Service Hijacking (T1496.004) | The attacker abused AWS WorkMail and SES to send high-volume phishing and spam emails from the victim account |

139.59.117[.]125

3.0.205[.]202

54.151.176[.]0

Note: 3.0.205[.]202 and 54.151.176[.]0 are Amazon-owned IP addresses, so take care when applying IP blocks.
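One way to use these indicators without over-blocking is to match them against CloudTrail `sourceIPAddress` values and down-rank hits on the Amazon-owned addresses. The following is a minimal sketch. The `high` and `review` labels are hypothetical. Service hostnames such as `workmail.us-east-1.amazonaws.com` are skipped because they are not IP addresses:

```python
import ipaddress

# Indicators from this report, defanged as published.
IOCS = ["139.59.117[.]125", "3.0.205[.]202", "54.151.176[.]0"]
# Amazon-owned per the note above; a match alone is weak evidence.
AMAZON_OWNED = {"3.0.205.202", "54.151.176.0"}


def refang(indicator: str) -> str:
    """Turn a defanged indicator such as 1.2.3[.]4 back into 1.2.3.4."""
    return indicator.replace("[.]", ".")


IOC_SET = {refang(i) for i in IOCS}


def match_ioc(event: dict):
    """Return 'high', 'review', or None for an event's sourceIPAddress."""
    source = event.get("sourceIPAddress", "")
    try:
        ip = str(ipaddress.ip_address(source))
    except ValueError:
        return None  # service hostnames, not IP addresses
    if ip not in IOC_SET:
        return None
    return "review" if ip in AMAZON_OWNED else "high"
```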

InsightIDR and Managed Detection and Response (MDR) customers have existing detection coverage through Rapid7’s expansive library of detection rules. These detections are deployed and will alert on the behaviors described in this technical analysis.

Rapid7 has partnered with ARMO, a leader in cloud infrastructure and application security based on runtime data, to offer Cloud Runtime Security. The new offering, currently in beta, extends our vulnerability and exposure management solution, Exposure Command, into the moment where cloud risk becomes real: while applications and workloads are running. The solution does this with several differentiators that map directly to what security leaders need most: signal accuracy and response speed.

Rapid7 Cloud Runtime Security combines kernel-level observability with AI-powered behavioral analysis to create a continuous, threat-aware defense layer within all cloud environments.

The solution provides:

AI-driven behavioral baselines for container activity. Because services, teams, and software releases create constant change, static policies can quickly become irrelevant and overly noisy. Cloud runtime security augmented by AI helps establish a behavioral baseline of what “normal” looks like for workload activity. This baseline becomes the standard for identifying deviations that indicate active exploits. This becomes even more critical for AI workloads in which runtime is the only place to understand behavior.

Root-cause in every risk finding. When a threat is detected, the platform does not just create noise by firing an alert. Instead, it reconstructs the entire event with root-cause insights by linking application-layer activity (like a SQL injection) to infrastructure-level changes (like a container escape). It also provides a natural-language narrative of the attack, showing exactly what happened, which credentials were used, and which resources were accessed.

Connected dots across the entire cloud ecosystem. Rapid7 Cloud Runtime displays the entire attack story, from cloud and Kubernetes events and cluster APIs to container and workload processes and individual lines of code. Instead of sifting through siloed, disparate security tools that each present different alerts, teams gain a single source of objective truth for faster forensic analysis.

Deep application-layer visibility. Instantly detect and respond to common attacks, including SQL injection, command injection, local file inclusion (LFI), and server-side request forgery (SSRF), which regular endpoint detection and response (EDR) tools overlook because their visibility is limited to the host and process level.

Orchestrated automated response to detected anomalies. Detection is only part of the battle. Speed is the difference between a contained event and a disruptive, expensive data breach. The solution automatically terminates malicious processes, pauses compromised containers, isolates namespaces, or blocks egress to prevent an attacker’s lateral movement.

Rapid7 Cloud Runtime Security enables orchestrated automated response when anomalies are detected, enabling teams to quickly mobilize and contain threats.

Chaos is the natural state of cloud environments. It isn't a deficiency but an inherent characteristic of distributed systems: instances shut down frequently, containers spin up and down constantly, deployments change multiple times per day, images get rebuilt and redeployed, identities and permissions drift, and workloads inherit misconfigurations at scale.

Traditional vulnerability management (VM) was designed to protect static, on-prem technology architectures. Periodic scans, CVSS scores, and reactive patching have been effective there, but point-in-time snapshots and reactive remediation strategies collapse in dynamic, highly distributed cloud environments for the following reasons:

Blind spots. Ephemeral cloud resources can spin up, perform a task, and disappear in minutes. If a vulnerable container exists for only 10 minutes between scheduled scans, traditional VM tools will miss it, while an automated attacker script can find and exploit it within seconds.

Missing context. Network scanners find CVEs, but they often lack contextual awareness. For instance, a ‘critical’ vulnerability in a library on an isolated container with no internet access may represent low risk. Conversely, a ‘medium’ vulnerability on a public-facing server with an over-privileged IAM role can lead to a catastrophic compromise.

Misconfigurations. In the cloud, vulnerabilities live not only in unpatched software but also in misconfigured systems. Consider a fully patched server that is compromised because of an open S3 bucket or a broad IAM policy. According to Gartner, “through 2026, nonpatchable attack surfaces will grow from less than 10% to more than half of the enterprise’s total exposure, reducing the impact of automated remediation practices.”1

AI-driven complexity. AI is accelerating innovation cycles, and as organizations ship more code, it adds new dimensions to the attack surface. These include weaknesses such as prompt injection, which can trick LLMs into revealing sensitive data or bypassing security controls.
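A back-of-the-envelope calculation makes the blind-spot argument concrete. Assume scans run at a fixed interval and a short-lived resource appears at a uniformly random moment between them. A point-in-time scan then sees the resource with probability lifetime divided by interval. The sketch below is illustrative arithmetic, not a model of any particular scanner:

```python
def chance_scan_sees(lifetime_min: float, scan_interval_min: float) -> float:
    """Probability that a point-in-time scan catches a short-lived resource.

    Assumes the resource starts at a uniformly random moment between scans
    and that each scan is instantaneous.
    """
    if lifetime_min >= scan_interval_min:
        return 1.0
    return lifetime_min / scan_interval_min


# A 10-minute container against a once-a-day scan.
p = chance_scan_sees(10, 24 * 60)
print(f"seen: {p:.2%}, missed: {1 - p:.2%}")  # seen: 0.69%, missed: 99.31%
```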

As modern cloud environments are constantly changing, security teams need to know in real time when exposures become active threats. Rather than toiling over a ‘high’ or ‘critical’ vulnerability, they prioritize remediation actions based on the paths that lead to compromise. This is because a vulnerability can become a critical exposure when the conditions around it make it reachable, exploitable, and high impact. Savvy security teams use exposure management solutions to assess whether they are likely to get compromised, then lean on cloud runtime platforms to identify, in real-time, whether they are actively compromised. As a result, the best security programs now run on a “two-engine” model:

Predictive and preemptive with exposure management. This risk-forecasting layer discovers, prioritizes, and guides action on the exposures most likely to lead to material impact. Organizations utilize exposure management solutions to identify which exposures should be addressed first, the shortest paths to breach, and the remediation activities that most reduce risk.

Real-time and proactive with runtime security. This threat-reality layer detects anomalous behavior as it happens and supports immediate containment actions. Organizations use runtime security solutions to assess whether an exposure is actively being exploited, the configuration changes that may have led to the exposure, and the actions that need to be taken to contain the threat.

Each engine is valuable on its own, but exposure management without runtime can cause teams to overlook active threats, and runtime without exposure context can drown teams in noisy alerts. Together, they enable teams to prioritize what matters most and respond instantly when a threat becomes active.

Visit our cloud security pages to learn more about how Rapid7 empowers teams to proactively manage risk, accelerate DevSecOps, and enforce compliance across multi-cloud environments.

1 Gartner, Predicts 2023: Enterprises Must Expand From Threat to Exposure Management, Jeremy D'Hoinne, Pete Shoard, Mitchell Schneider, John Watts, December 2022